I’ve been seeing a lot of mentions of Walter Writes AI Reviews and I’m trying to figure out if it’s actually useful or just clever marketing. I’m looking for real user experiences: How accurate are the reviews, is it worth the time and cost, and does it genuinely help you pick better AI tools? Any honest feedback, pros, cons, or alternatives would really help me decide before I invest more time into it.

Walter Writes AI review, from someone who tried to break it

I spent an afternoon pushing Walter Writes AI through a bunch of detectors, and the results were all over the place.

I only had the free version, which limits you to the “Simple” mode. Paid plans add “Standard” and “Enhanced” bypass modes, so maybe those behave differently, but I did not pay for those.

Here is what I saw.

First round of testing

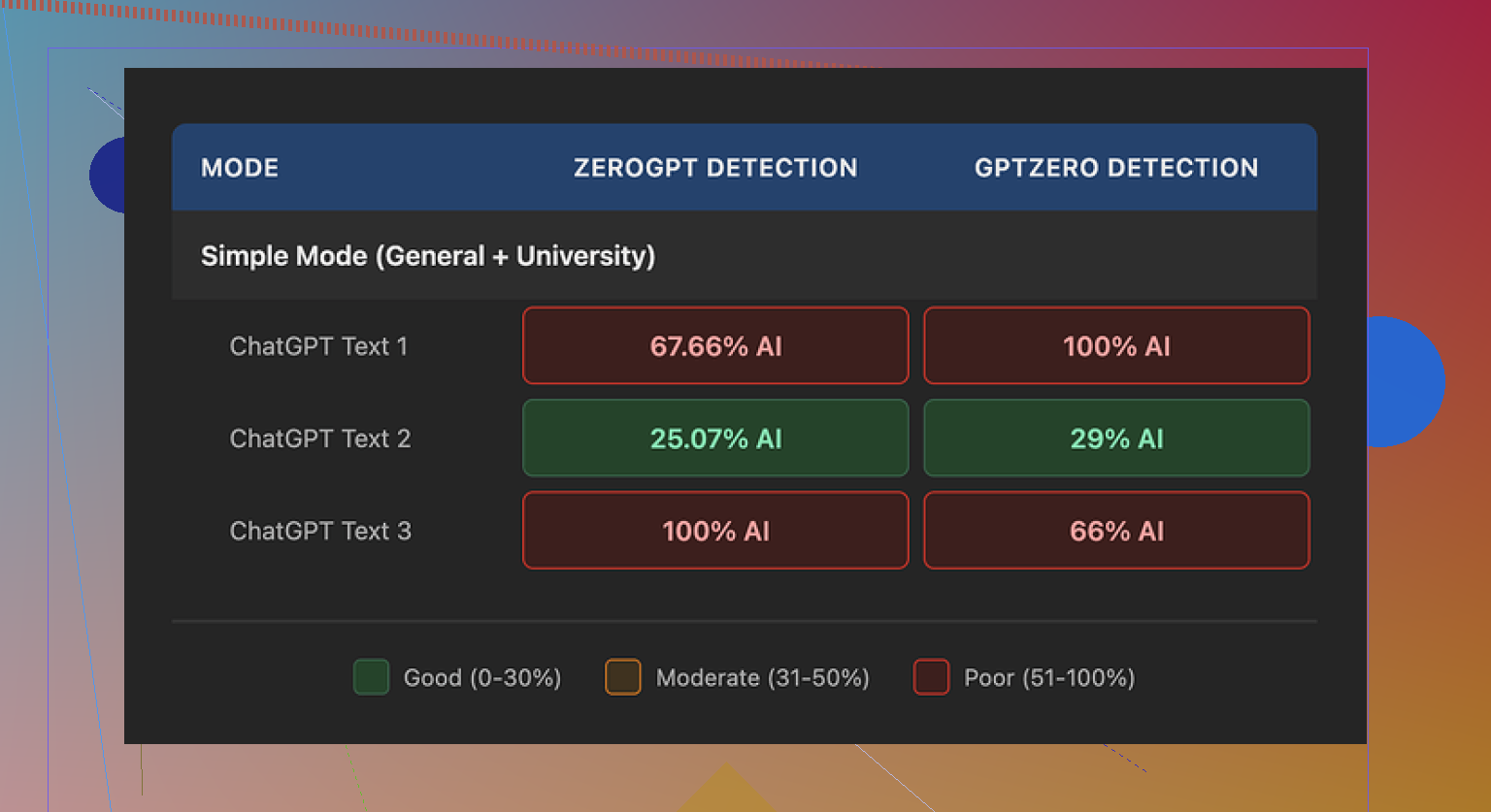

I ran three separate samples from Walter through GPTZero and ZeroGPT.

One sample did surprisingly well:

- GPTZero: 29 percent AI

- ZeroGPT: 25 percent AI

For context, that is already better than most free “humanizer” tools I have tested. Most of them light up like a Christmas tree on at least one detector.

Then it fell apart:

- The other two samples both hit 100 percent AI on at least one detector.

So, same tool, same settings, different prompts, and the scores swing from “eh, passable” to “this is clearly AI” in a couple of runs. That inconsistency is the main issue I had.

Here is one of the detector screenshots from the tests:

Writing quirks that kept repeating

Detectors aside, the text itself looked odd to me in a few clear ways:

- Weird semicolon obsession

  It kept dropping semicolons in spots where a normal person would put a comma or just split things into two sentences. Not once or twice, but so often it started to look like a tell.

- Repeating “today”

  In one sample, the word “today” showed up four times in three sentences. No normal writer does that without noticing. It read like something trying to sound current and failing.

- Parentheses spam

  Another pattern was the heavy use of parenthetical examples over and over, like:

  - “(e.g., storms, droughts)”

  - similar structures repeated across the output

  That format screams “template.” Humans use examples, sure, but this locked into the same pattern in multiple spots.

Once you see these habits, you start to recognize the same fingerprint across the samples.
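If you want to check your own output for the same habits without eyeballing it, a rough counter is enough. This is only a sketch: the function name `quirk_report` and the sample text are made up for illustration, the sentence split is naive, and it has nothing to do with how any detector scores text.

```python
import re
from collections import Counter


def quirk_report(text: str) -> dict:
    """Count the three habits described above in a piece of text."""
    # Naive sentence split: break after ., ! or ? when followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    # Lowercased word counts, used for the repeated-word check.
    counts = Counter(re.findall(r"[a-z']+", text.lower()))
    return {
        "sentences": len(sentences),
        "semicolons": text.count(";"),
        "today_count": counts["today"],
        # Parentheticals shaped like "(e.g., storms, droughts)".
        "eg_parentheticals": len(re.findall(r"\(e\.g\.,[^)]*\)", text)),
    }


# Hypothetical sample written to show the patterns, not real tool output.
sample = (
    "Farming is hard today; weather is unpredictable today. "
    "Risks are rising (e.g., storms, droughts). "
    "Costs are rising today (e.g., fuel, feed)."
)
report = quirk_report(sample)
print(report)
```

High counts relative to the number of sentences are a hint to edit by hand, nothing more.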

Pricing and limits

This is where I started to lose interest.

Here is what the pricing looked like at the time I checked:

- Starter plan:

  - From $8 per month on an annual plan

  - 30,000 words included

- Unlimited tier:

  - From $26 per month

  - “Unlimited” in total volume, but each individual submission is capped at 2,000 words

- Free tier:

  - 300 words total

  - That is not 300 per day, it is 300 in total, then you hit the wall

So if you write long-form content, those 2,000 word caps per submission will get annoying fast. You would be constantly chopping pieces of text and reassembling them.
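For anyone who does end up chopping text by hand, the splitting part is easy to script. A minimal sketch, assuming paragraphs are separated by blank lines and that the cap is counted in whitespace-separated words (I do not know how the tool itself counts); `split_into_submissions` is a name I made up.

```python
def split_into_submissions(text: str, max_words: int = 2000) -> list[str]:
    """Pack paragraphs into chunks of at most max_words words each."""
    chunks: list[str] = []
    current: list[str] = []  # paragraphs in the chunk being built
    current_words = 0

    for para in (p.strip() for p in text.split("\n\n")):
        if not para:
            continue
        words = para.split()
        # A single paragraph over the cap is cut on word boundaries.
        if len(words) > max_words:
            if current:
                chunks.append("\n\n".join(current))
                current, current_words = [], 0
            for i in range(0, len(words), max_words):
                chunks.append(" ".join(words[i:i + max_words]))
            continue
        # Start a new chunk if this paragraph would push us over the cap.
        if current_words + len(words) > max_words:
            chunks.append("\n\n".join(current))
            current, current_words = [], 0
        current.append(para)
        current_words += len(words)

    if current:
        chunks.append("\n\n".join(current))
    return chunks


# Five 900-word paragraphs (4,500 words) as a stand-in for a long article.
article = "\n\n".join(" ".join(["word"] * 900) for _ in range(5))
chunks = split_into_submissions(article)
sizes = [len(c.split()) for c in chunks]
print(sizes)
```

Breaking on paragraph boundaries keeps each submission readable on its own, which makes stitching the results back together less painful.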

Refund and data policy issues

Two things in their policies made me pause.

- Refund policy

  The refund wording felt hostile. It included strong chargeback language and even threats of legal action around disputes. I am used to SaaS tools saying “no refunds” or “limited refunds.” Threatening people over chargebacks in the policy text felt off.

- Data handling

  I went looking for a clear statement on how long they store user-submitted text or how they handle it behind the scenes. It stayed vague. No precise retention window, no straight, detailed explanation. For a tool where you paste in content you might care about, that is not ideal.

What I ended up using instead

While testing humanizers in general, I kept coming back to Clever AI Humanizer. I pushed similar prompts through it and the output usually read closer to something I would write myself, with fewer obvious AI patterns.

Key points from my tests with Clever AI Humanizer:

- No payment needed to use it for regular volumes.

- Text looked more natural across multiple detectors and by eye.

- It did not lean on the same strange punctuation habits.

If you want walkthroughs and third-party takes, these helped me when I was sorting this stuff out:

- Humanize AI tutorial on Reddit

  https://www.reddit.com/r/DataRecoveryHelp/comments/1l7aj60/humanize_ai/

- Clever AI Humanizer review on Reddit

  https://www.reddit.com/r/DataRecoveryHelp/comments/1ptugsf/clever_ai_humanizer_review/

- YouTube video review of Clever AI Humanizer

  https://www.youtube.com/watch?v=G0ivTfXt_-Y

Where I landed on Walter Writes AI

If you are on the free tier with Simple mode only, expect:

- Some runs that slip past detectors decently well.

- Other runs that get marked 100 percent AI.

- Text with noticeable patterns in punctuation and phrasing.

If you pay for the higher modes, your mileage might differ, but given the pricing, per-submission caps, refund language, and unclear data retention, I stuck with Clever AI Humanizer for my own stuff.

Short version from my tests: Walter Writes AI is “okay, but touchy,” and not something I’d trust for anything important.

I had a paid month, used it for review-style content and some longer blog sections, and my take lines up with what @mikeappsreviewer saw, but with a few differences.

- Accuracy of the “humanization” and reviews

- Output sometimes passed GPTZero / ZeroGPT in small chunks, then got flagged hard on longer pieces.

- The “Standard” and “Enhanced” modes helped a bit, but the pattern stayed. Once you go past 800 to 1,000 words, the text starts looking samey.

- It loves certain phrases and sentence rhythms. After a week I could spot Walter text without any detector. That is not great if your goal is to fly under the radar.

For “AI reviews” specifically, I saw:

- Overly neutral tone, even when the source content had strong pros or cons.

- Repeated sentence templates like “Overall, this tool is a solid choice for users who…” on multiple products.

- Weak factual grounding. It sometimes made confident claims that were not in the source material or even wrong for that niche.

- Usefulness day to day

Good for:

- Quick drafts of generic product review sections.

- Filler paragraphs like “who is this for”, “pros and cons”, “final thoughts” type stuff.

Not good for:

- Anything where voice matters.

- Topics where accuracy or nuance matters.

- Long form that needs consistency across sections. You end up editing so much you lose any time savings.

- Pricing and friction

  I agree with @mikeappsreviewer on the annoyance factor, but I did not find the 2,000 word cap as bad because I usually work in sections anyway. If you write long single-pass articles, you will hate chopping text up.

  The refund and chargeback wording turned me off too. I did not have an issue, but reading that kind of language in a SaaS TOS is a red flag for me.

- Data and trust

  The vague data retention policy is the main reason I stopped pasting client stuff there. If you do any work with sensitive drafts, I would avoid it. For low-stakes niche sites or throwaway content, it is less of a concern, but still not ideal.

- Alternatives

If your real goal is “text that reads more human and triggers fewer detectors,” I get better results with Clever AI Humanizer.

- Feels closer to how I or other freelancers write.

- Less obvious quirks in punctuation and word choice.

- Did not force me into a tight paywall early.

I still hand edit everything, but Clever AI Humanizer gets me to a cleaner starting point than Walter did.

Verdict from my side:

- Walter Writes AI Reviews is not a scam, but it is not some magic review writer either.

- It is usable for low-stakes, generic review sites if you are okay with heavy editing and some detection risk.

- For anything serious, or if you care about your long term brand voice, you will spend too much time fixing it.

Short version: it’s… fine, but way more hype than substance.

I’m pretty aligned with @mikeappsreviewer and @sterrenkijker on the “not a scam, but not magic” verdict, with a couple of nuances from my side:

1. How “accurate” are the reviews?

For review-style content, it tends to:

- Flatten everything into the same middle‑of‑the‑road tone

- Reuse structures like “Overall, this tool is a solid option for…” until your eyes bleed

- Sprinkle in generic pros/cons that sound right but aren’t always grounded in the actual product

If you’re hoping for something that really reflects hands‑on experience with a product, nah. You still have to inject your own specifics or you’ll end up with 20 pages that read like thin affiliate fluff.

I did not see it hallucinate super wild stuff all the time, but it absolutely invented “features” or “typical use cases” that weren’t in the source notes. It’s basically decent filler, not a trustworthy reviewer.

2. Detector / “humanization” side

Slightly different take from the others: I don’t think the detector inconsistency is only Walter’s fault. AI detectors themselves are pretty unreliable. So:

- Walter being flagged on long pieces didn’t shock me

- What did bug me was how recognizable its “voice” becomes once you’ve used it for a week: odd punctuation habits, repeated transitions, and that generic “content farm” rhythm

So yes, sometimes it passes GPTZero/ZeroGPT in chunks, sometimes it gets nailed, but honestly if your main goal is to “beat detectors,” that’s a losing game in general.

3. Is it worth paying for?

If your use case is:

- Low‑stakes niche sites

- Short review snippets like “pros/cons,” “who it’s for,” “final verdict”

- You’re fine with editing heavily

Then the lower tier might be “worth it” in the sense of “saves you 30–40% of your time on first drafts.”

If you:

- Care about unique voice or brand

- Work with client or sensitive content

- Need reliable, well‑grounded reviews

Then I don’t think it’s worth the friction, especially with that stiff refund language and unclear data retention. That policy stuff is a legit red flag for me, not just nitpicking.

4. Competitors / alternatives

Since you mentioned “AI reviews” and “humanization,” I’d look at tools that lean more into natural variation and privacy language. Clever AI Humanizer is the obvious one here. It tends to:

- Produce text that reads more like a real freelancer wrote it

- Avoid the weird punctuation/semicolons pattern Walter falls into

- Be more usable without hitting an instant paywall

You’ll still need to fact‑check and add your own product experience, but as a starting point, Clever AI Humanizer has been less annoying and more SEO‑friendly for me than Walter, especially when you’re churning out a lot of product review content.

5. So, good tool or just marketing?

I’d put it like this:

- Not useless

- Not “the” solution

- Kind of a mid‑tier content helper with aggressive marketing

If you’re curious, try the free tier just to feel the writing style, but I wouldn’t lock myself into a paid plan unless you’ve tested it on a full real article and are ok with how much cleanup it needs.

Short answer: Walter Writes AI Reviews is “usable, but not strategic.”

Let me zoom in on a few angles that haven’t been fully covered yet.

1. What Walter is actually good at

Where I think @sterrenkijker, @espritlibre and @mikeappsreviewer are all correct:

- Boilerplate blocks

  “Who is this for,” “final verdict,” generic pros/cons. Walter is decent at these when you already know the product and just need something to reshape.

- Volume over depth

  If you are churning out many low‑value, affiliate‑style pages where each tool gets a paragraph or two, Walter can fill space fast.

Where I slightly disagree:

- “Not something I’d trust for anything important” is a bit harsh.

For internal drafts, competitor comparison notes, or quick outline fleshing, it is fine, as long as you treat it like a rough sketch and not a finished review.

2. Big weaknesses that hit you over time

A few problems get more obvious once you build a whole site with it:

- Voice collapse

  Every product ends up sounding like a mid‑tier SaaS: “overall, a solid choice” syndrome. If all your reviews are Walter‑heavy, your domain ends up with a single, flat voice that looks automated.

- Pattern fatigue

  The punctuation quirks that others mentioned are not just cosmetic. They make your whole content library traceable as “same source,” which is dangerous if you care about long‑term brand and manual review from platforms.

- Shaky topical depth

  In more competitive niches (hosting, VPNs, finance tools), those generic Walter paragraphs look painfully thin next to human‑researched reviews. You might rank for long tail early, then get outclassed as soon as someone with real expertise shows up.

3. Detector talk and reality check

People get hung up on GPTZero and similar. I agree with @mikeappsreviewer that the detector scores are inconsistent, but the bigger issue is this:

If your content strategy depends on “fooling” detectors, you are already playing the wrong game.

What matters more:

- Does the text read like it was written by the same half‑bored copywriter 200 times in a row?

- Can a manual reviewer or client skim three pages and instantly see it is templated?

Walter makes it hard to pass that human sniff test, especially in long form.

4. Where Clever AI Humanizer fits in

If your goal is review content that feels more like a person actually typed it, Clever AI Humanizer is closer to that than Walter, at least in my experience.

Pros of Clever AI Humanizer

- More natural rhythm

  It produces sentences that vary in length and structure, which helps reviews feel less like “SEO content” and more like real commentary.

- Better for patching AI text

  If you already drafted something with another model, running it through Clever AI Humanizer and then editing manually tends to reduce the obvious AI fingerprints.

- Lower initial friction

  You can realistically try it on real sections of your site without immediately bumping into tiny word caps.

Cons of Clever AI Humanizer

- Still needs human fact‑checking

  It will not magically know if your review claims match the product’s real behavior. You must bring the actual experience or research.

- Can occasionally over‑casualize tone

  Sometimes you get phrasing that is a bit too chatty if your brand is more formal. You have to nudge it toward your voice with edits.

- Not a “set and forget” solution

  If you are hoping to push a button and have 2,000 perfect words, this is not it either. It is a better starting point, not an endpoint.

Used correctly, Clever AI Humanizer is stronger for “make this sound like a person” than for “write my whole article.”

5. How I would actually use these tools

If I were building or maintaining an AI‑assisted review site today:

- I would use Walter only for:

  - Throwaway drafts

  - Quick pros/cons scaffolding

  - Early outlines that I plan to heavily rewrite

- I would use Clever AI Humanizer for:

  - Cleaning up obviously AI‑ish sections

  - Smoothing tone across multiple reviews so they feel written by the same human editor

  - Making already‑researched copy more readable and conversational

- I would never:

  - Paste sensitive client material into Walter, given the vague data handling others flagged

  - Let either tool publish text without a human going through for accuracy, tone, and brand fit

6. Verdict in plain terms

- Walter Writes AI Reviews:

  - Not a scam, not useless

  - Fine for low‑stakes filler and internal drafts

  - Too generic, too “samey,” and too policy‑sketchy to anchor a serious review brand

- Clever AI Humanizer:

  - Better for making AI‑drafted reviews read more like a real person

  - Still requires editing and real product knowledge

  - Makes sense as a readability and “de‑robotify” layer in your workflow

If your business actually depends on trust, user loyalty, or clients, treat both as helpers, not as reviewers. The “review” is still you.