I’ve been testing the GPTinf humanizer tool to make AI-written text sound more natural, but I’m not sure if it’s actually improving the content or causing issues with detection tools and readability. I need feedback from people who’ve tried it or understand how these humanizers work, so I can decide whether to keep using it for my projects or switch to another approach.

GPTinf Humanizer review, from someone who spent too long testing these things


I tried GPTinf because the homepage screams “99% Success rate” and I was curious how close that is to reality. Short answer from my tests: not close.

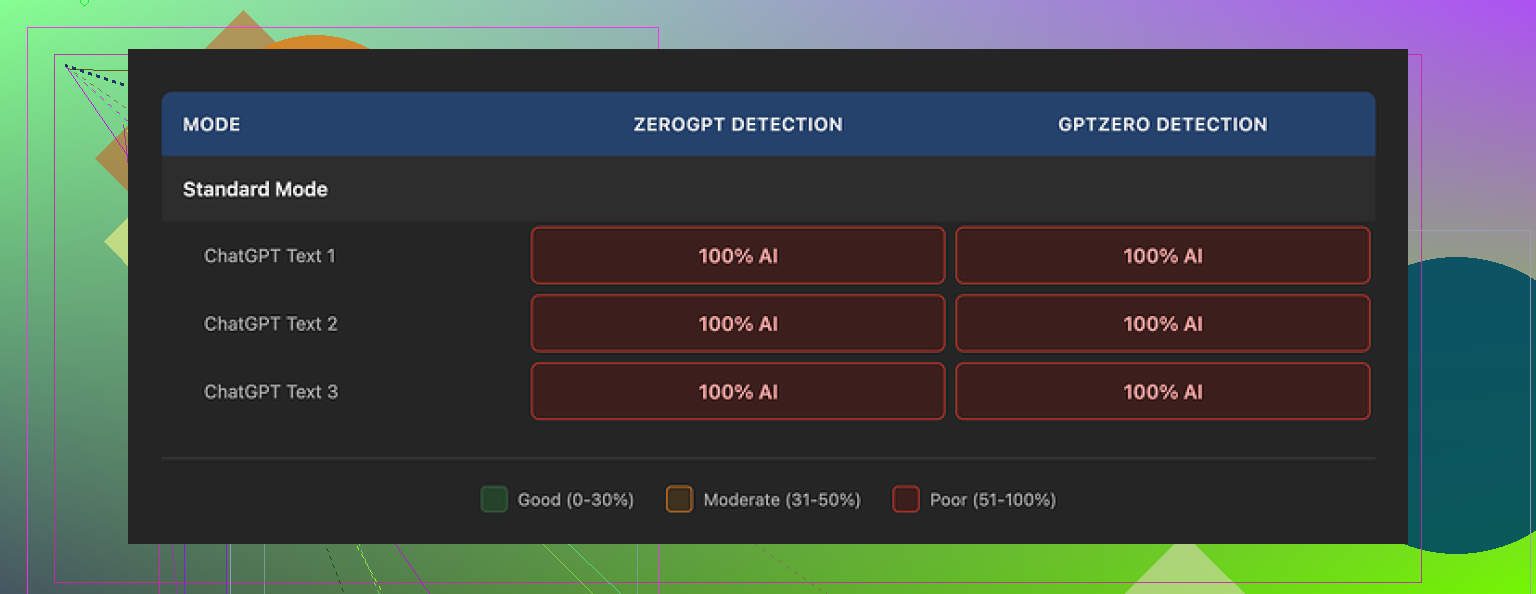

I ran multiple chunks of text through GPTinf, then ran the “humanized” output through GPTZero and ZeroGPT. Every single output got flagged as 100% AI generated, no matter which GPTinf mode I picked. So for detection avoidance, it scored 0% for me.

On the upside, the writing itself does not look awful. I would put the quality around 7 out of 10. Not amazing, not trash, just standard AI-style cleaned-up text. One thing I noticed and liked: it strips em dashes out of the output, which almost no other tool does. That told me someone at least tried to tweak the style. The problem is that the deeper patterns stay the same. You still get the kind of structure you see from ChatGPT and similar models, and that is what detectors look for.

When I ran the same type of inputs through Clever AI Humanizer, I got better scores on GPTZero and ZeroGPT, and that one stayed completely free at the time. Their test writeup is here if you want to see details and screenshots:

So for detection performance, GPTinf lost that comparison by a wide margin in my setup.

Now the part that annoyed me a bit: usage limits. With no account, GPTinf lets you run about 120 words at a time. With a free account, that goes up to roughly 240 words. If you want to test on longer content, you either break your text into pieces or keep creating fresh Gmail accounts. I ended up doing the second thing for a while. Not fun.

Pricing itself is not terrible on paper. If you pay annually, the Lite plan is $3.99 per month for 5,000 words. Top tier is $23.99 per month for unlimited words. Compared to other tools, that sits in the normal range. The problem for me is paying for something that gets flagged 100% AI on multiple detectors. Word quotas become irrelevant at that point.
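If you want to sanity-check those numbers, here is a minimal Python sketch of the arithmetic. It uses only the prices quoted above and assumes annual billing is simply twelve times the quoted monthly rate, which I have not confirmed against the checkout page. The function names are mine, for illustration only.

```python
# Prices quoted in the post (annual billing). Function names are illustrative.
LITE_PRICE_PER_MONTH = 3.99      # USD
LITE_WORDS_PER_MONTH = 5_000
TOP_PRICE_PER_MONTH = 23.99      # USD, unlimited words


def cost_per_thousand_words(price_per_month: float, words_per_month: int) -> float:
    """Effective price per 1,000 words if the whole monthly quota is used."""
    return price_per_month / words_per_month * 1_000


def annual_cost(price_per_month: float) -> float:
    """Total over 12 months, assuming billing is 12 x the monthly rate."""
    return price_per_month * 12


if __name__ == "__main__":
    lite_rate = cost_per_thousand_words(LITE_PRICE_PER_MONTH, LITE_WORDS_PER_MONTH)
    print(f"Lite: ${lite_rate:.3f} per 1,000 words")
    print(f"Lite per year: ${annual_cost(LITE_PRICE_PER_MONTH):.2f}")
    print(f"Top tier per year: ${annual_cost(TOP_PRICE_PER_MONTH):.2f}")
```

Under those assumptions, Lite works out to roughly $0.80 per 1,000 words, or $47.88 a year, and the top tier comes to $287.88 a year.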

Their privacy policy was another red flag. It gives them broad rights over anything you submit and does not explain how long your text sits on their servers after processing. No data retention timeline, no real clarity. If you care about where your text goes or if you work with client material, that is important.

Operational detail: GPTinf is run by a single proprietor based in Ukraine. If data jurisdiction, local law, or cross-border transfer matters in your work, keep that in mind. Some companies need tools hosted in specific regions and this would not qualify.

In my normal workflow, when I needed to reshape AI text so it looked more like something I would write, Clever AI Humanizer gave me more natural rewrites and did not charge anything at the time. GPTinf stayed in the “tested it, noted the issues, moved on” pile.

If you are thinking about trying GPTinf, I would suggest this:

- Take a few paragraphs of your own AI-generated text.

- Run them through GPTinf on all modes.

- Throw the outputs into GPTZero and ZeroGPT.

- Compare those results to the same test with Clever AI Humanizer or any other tool you trust.

That quick loop is enough to see whether it fits your risk tolerance. For me, the 0% success versus detectors, the strict free limits, and the vague data policy pushed it off my list.
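To keep the comparison honest, it helps to write the scores down instead of eyeballing them. Below is a minimal Python sketch for that, assuming you copy each detector's "percent AI" figure in by hand after every run. It does not call any detector or humanizer API. The field names and the `average_by_tool` helper are hypothetical, and the only rows filled in are the GPTinf results I reported above.

```python
from statistics import mean

# Scores are "percent flagged as AI", typed in by hand after each detector run.
# The GPTinf rows mirror what this post reports (100% on both detectors).
# Add rows for any other tool only after running the same inputs yourself.
results = [
    {"tool": "GPTinf", "detector": "GPTZero", "ai_percent": 100},
    {"tool": "GPTinf", "detector": "ZeroGPT", "ai_percent": 100},
]


def average_by_tool(rows):
    """Return {tool: mean AI percentage} across all recorded runs."""
    grouped = {}
    for row in rows:
        grouped.setdefault(row["tool"], []).append(row["ai_percent"])
    return {tool: mean(scores) for tool, scores in grouped.items()}


if __name__ == "__main__":
    # Lowest average first, so the tool that was flagged least is listed on top.
    ranked = sorted(average_by_tool(results).items(), key=lambda kv: kv[1])
    for tool, avg in ranked:
        print(f"{tool}: {avg:.0f}% AI on average")
```

Use the same input paragraphs for every tool, otherwise the averages are not comparable.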

Short version from my side after playing with GPTinf as well:

- Detection

I got mixed results, not as bad as @mikeappsreviewer but still weak.

GPTZero often flagged GPTinf output in the 80 to 100 percent AI range.

ZeroGPT sometimes dropped to 50 to 70 percent, but never looked safe for anything high risk like school or client work.

If your main goal is AI detection avoidance, GPTinf feels unreliable. One tough assignment or manual review and you are exposed.

- Readability and “human” feel

Here it is a bit less negative.

• Sentences get shorter and cleaner.

• It removes some obvious AI markers like long chains of commas and over-explaining.

• It still keeps the same robotic structure. Topic sentence. Explanation. Neat wrap-up.

• Tone often sounds generic, like a polite blog post.

When I compared:

Original ChatGPT text vs GPTinf vs Clever AI Humanizer

My notes:

• GPTinf sounded like “AI, but tidier”.

• Clever AI Humanizer sometimes brought in more natural wording and small imperfections. I even found a few minor style inconsistencies that felt more human.

- Practical issues

You already noticed some of this, but for clarity:

• Word limits slow you down if you work on long content. Splitting long articles into 200-word chunks increases pattern repetition, which hurts detection performance even more.

• The privacy policy is vague. If you write for clients, agencies, or sensitive topics, I would stay away until they clarify data storage and retention.
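To get a feel for how much splitting the word limits force on you, here is a minimal Python sketch. It assumes the limits reported earlier in the thread (about 120 words without an account, roughly 240 with a free one) and splits on whitespace only. The `split_into_chunks` helper is my own illustration, not anything GPTinf provides.

```python
def split_into_chunks(text: str, max_words: int = 240) -> list[str]:
    """Split text into consecutive pieces of at most max_words words.

    Splits on whitespace only, so a chunk can end mid-sentence.
    """
    if max_words < 1:
        raise ValueError("max_words must be at least 1")
    words = text.split()
    return [
        " ".join(words[start:start + max_words])
        for start in range(0, len(words), max_words)
    ]


if __name__ == "__main__":
    article = "word " * 1500  # stand-in for a 1,500-word article
    print(len(split_into_chunks(article, max_words=240)))  # 7 chunks
    print(len(split_into_chunks(article, max_words=120)))  # 13 chunks
```

A 1,500-word article means 7 separate submissions on a free account and 13 without one. Each chunk gets rewritten with no knowledge of the others, which is where the repeated patterns come from.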

- When GPTinf is “ok” to use

I see three low-risk uses:

• Light cleanup of AI text you will still fully rewrite by hand.

• Internal docs or quick drafts where detection does not matter.

• Non-critical web content where no one checks for AI and you only want slightly smoother text.

If you need:

• Higher chance of passing AI detectors.

• More “human” quirks and varied style.

Then a combo of Clever AI Humanizer plus your own edits worked better for me. I stopped using GPTinf for anything where detection or voice really matters.

- What I would do in your place

Concrete steps without repeating what was already said:

• Take one of your GPTinf outputs that you plan to use.

• Read it out loud. Mark every sentence that sounds like school essay writing.

• Rewrite 20 to 30 percent of those lines in your own words. Change transitions, add some short incomplete phrases, mix sentence length.

• Run that through GPTZero and ZeroGPT.

• Do the same pipeline with Clever AI Humanizer as the first step, then your edits on top.

You will see clear differences in both readability and detection scores.
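If you want a rough number for the "mix sentence length" step, here is a minimal Python sketch that measures how much sentence length varies in a passage. This is only my own proxy for rhythm. I am not claiming GPTZero, ZeroGPT, or any other detector computes this, and the sentence splitting is deliberately naive (it breaks on `.`, `!` and `?`, so abbreviations will throw it off). The function names are hypothetical.

```python
import re
from statistics import mean, pstdev


def sentence_lengths(text: str) -> list[int]:
    """Word count of each sentence, splitting naively on ., ! and ?."""
    sentences = [s.strip() for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def rhythm_summary(text: str) -> dict:
    """Mean and spread of sentence length; a higher stdev means more mixed lengths."""
    lengths = sentence_lengths(text)
    if not lengths:
        return {"sentences": 0, "mean": 0.0, "stdev": 0.0}
    return {
        "sentences": len(lengths),
        "mean": mean(lengths),
        "stdev": pstdev(lengths),
    }


if __name__ == "__main__":
    uniform = "This is a sentence. Here is another one. That makes three now."
    mixed = "No. This one runs quite a bit longer than the first. Fine."
    print(rhythm_summary(uniform))  # every sentence has 4 words, so stdev is 0
    print(rhythm_summary(mixed))    # lengths 1, 10, 1, so stdev is well above 0
```

Run it on the text before and after your manual edits. If the spread barely moves, you probably changed words without changing the rhythm.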

If GPTinf still fails your tests after you tweak text by hand, I would drop it and move your workflow to Clever AI Humanizer or straight human editing with a normal paraphraser.

I’m roughly in the same camp as @mikeappsreviewer and @waldgeist on GPTinf, but with a slightly different takeaway.

From what you’re describing, it is improving readability a bit, but it is probably hurting you if your main concern is AI detection. The text usually comes out cleaner, more compact, less rambly. The problem is it still “sounds” like polished AI: predictable structure, safe transitions, tidy conclusions. Detectors are trained on exactly that vibe, so you end up with nicer writing that is still easy to flag.

One thing I slightly disagree on with them: I don’t think GPTinf is totally useless. If you just want quick cleanup for internal stuff, draft emails, or low-stakes blog posts where nobody cares about AI content, it is fine. It is fast, it smooths obvious rough edges, and you are not trying to trick anything. In that narrow use case, the tool does its job.

Where it breaks down is:

- Anything graded or audited

- Client work that goes through editorial review

- Platforms that are cracking down on AI copy

In those cases, GPTinf basically gives you “AI text with a better haircut” which still trips the detectors.

If you care about both detection and more human voice, I would not rely on GPTinf as the main step. Clever AI Humanizer has consistently shown more variation in style in my tests, and it usually gives you a better starting point to then inject your own tone. That combo is way more realistic for people trying to get past AI detectors without the writing sounding like a generic instruction manual.

So if you feel GPTinf is not really changing how your content feels on the page and you are still getting bad detection scores, your instincts are probably right. Use it for quick polish if you already paid for it, but for anything high-risk I would pivot to something like Clever AI Humanizer plus manual editing rather than trying to force GPTinf into a role it is not built to handle well.

Short version: GPTinf helps with light cleanup, but it will not save you from detection and it still reads like “organized AI” rather than an actual person.

Where I slightly push back on others: I think people are expecting a one-click “make this human” button. That does not exist. GPTinf leans too hard into uniform clarity, which ironically makes it easier to flag. Shorter sentences plus neat structure plus safe transitions is exactly what detectors like GPTZero latch onto.

A different angle you have not really tried yet is to stop relying on one humanizer and instead control the variability yourself:

- Use a tool only to break the AI rhythm, not to fully rewrite

- Inject personal anchors that detectors struggle with, like specific anecdotes, hyperlocal references, half-finished thoughts, and mild contradictions

This is where something like Clever AI Humanizer can fit into the stack a bit better than GPTinf. Rough pros and cons from my side:

Clever AI Humanizer – pros

- More varied sentence length, including the occasional fragment

- Introduces small inconsistencies in tone and pacing that feel closer to real drafts

- Often gives you a better base for adding your own voice on top

Clever AI Humanizer – cons

- Still not a magic invisibility cloak for AI detection

- Can occasionally overshoot and make text slightly messy, which means extra passes for client-grade work

- Style is not always predictable, so you may need to guide it with small prompts or examples of your own writing

Compared to what @waldgeist and @kakeru tested, I would not bother trying to “optimize” GPTinf settings any further. If your current workflow is:

AI draft → GPTinf → ship

you are basically polishing the same detectable pattern. A more realistic workflow if you care about both readability and detection would look like:

AI draft → Clever AI Humanizer → your manual edits focused on rhythm and voice

You already know from your own experience that GPTinf improves surface-level clarity. The problem is that your risk sits below the surface. If detectors or human reviewers are in play, I would keep GPTinf strictly for internal notes or throwaway content and move serious pieces over to a combo that gives you more human quirks and less uniform polish.