I’m using GPTHuman AI to review and refine some AI-generated content, but I’m not sure if I’m setting it up or using it correctly. The results feel off, and I’m worried I might be missing key steps or settings. Can someone explain how to properly use GPTHuman AI review tools, what best practices to follow, and how to get the most accurate, human-like feedback from it?

GPTHuman AI Review

I spent a weekend beating on GPTHuman to see if it was worth using to hide AI text. Short version, it did not do what the site promised for me.

GPTHuman calls itself “the only AI Humanizer that bypasses all premium AI detectors.” I saw that line, rolled my eyes a bit, then ran my usual test set through it.

I took three different AI-generated samples, ran them through GPTHuman, then pushed the outputs through detectors.

Here is what happened:

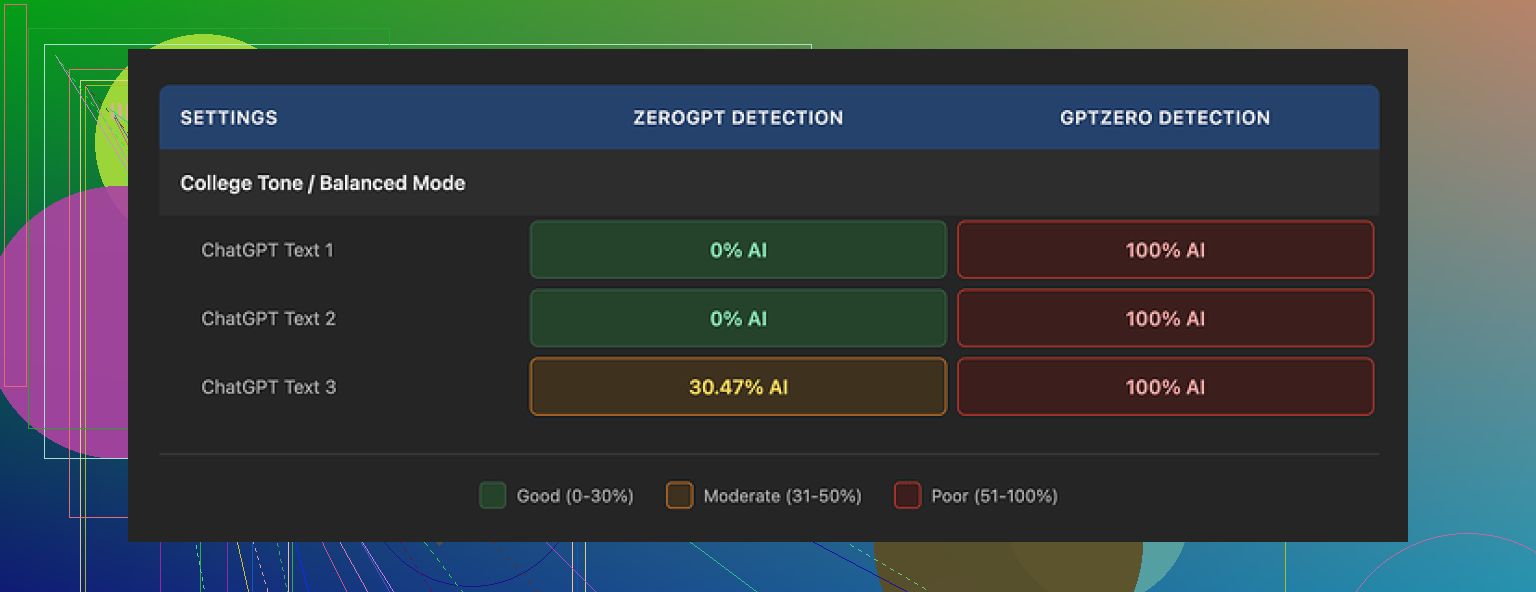

• GPTZero flagged every single GPTHuman output at 100% AI. All three. No borderline scores.

• ZeroGPT passed two of them at 0% AI, then flagged the third at about 30% AI.

So the “bypasses all premium AI detectors” claim did not hold up in my tests. Maybe some people get luckier with different content, but if you write essays, blog posts, or longer-form stuff, I would not trust it based on what I saw.

The part that annoyed me most was the “human score” inside GPTHuman.

It showed high pass rates that looked nice on their own dashboard, but those numbers did not line up with GPTZero or ZeroGPT at all. If you only watched their internal score, you would think your text is safe when it is not.

On the writing side, the outputs looked clean at a glance. Paragraph spacing is fine, structure is clear, nothing weird like random bullet points in the middle. Then you read closer and things start falling apart.

I kept seeing:

• Subject-verb agreement issues

• Sentences that stop halfway and feel chopped

• Bad word swaps where the synonym does not fit the sentence

• Endings that read like someone deleted every third word

So you end up with text that looks okay when you scroll, but feels off when you read it line by line. I would not hand this in for school or send it to a client without heavy editing.

The free tier was another problem. You only get about 300 words total before it locks you out. Not 300 words per run, 300 words across everything. I hit the cap so fast I thought it bugged out. Ended up making three separate Gmail accounts to finish my normal test routine.

Pricing when you start paying:

• Starter plan from $8.25 per month if you pay yearly

• “Unlimited” plan at $26 per month, but each run is capped at 2,000 words

So even the top tier still slices long documents. For people trying to humanize a report, thesis, or long article, you will be chopping your text into chunks and pasting repeatedly.
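If you do end up splitting a long document, a short script saves counting words by hand. This is only a minimal sketch, assuming paragraphs are separated by blank lines and that cutting at paragraph boundaries is good enough. `split_into_chunks` and `max_words` are hypothetical names for illustration, and nothing here talks to GPTHuman itself; you still paste each chunk in manually.

```python
import re


def split_into_chunks(text: str, max_words: int = 2000) -> list[str]:
    """Greedily pack whole paragraphs into chunks of at most max_words words.

    Assumption: paragraphs are separated by blank lines. A single paragraph
    longer than max_words is kept whole, so that one chunk can exceed the cap.
    """
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]

    chunks: list[str] = []
    current: list[str] = []
    current_words = 0

    for para in paragraphs:
        n = len(para.split())
        # Start a new chunk if adding this paragraph would pass the cap.
        if current and current_words + n > max_words:
            chunks.append("\n\n".join(current))
            current, current_words = [], 0
        current.append(para)
        current_words += n

    if current:
        chunks.append("\n\n".join(current))
    return chunks
```

Set `max_words=2000` to match the per-run cap on the paid plan, or something much lower if shorter runs behave better for you. Any paragraph that is longer than the cap on its own will need a manual split.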

Terms are also not great:

• All purchases are non-refundable

• Your content gets used for their AI training by default; you have to opt out

• They keep the right to use your company name in their marketing unless you explicitly tell them not to

If you work with client docs, NDAs, or anything sensitive, read that twice before you push content through.

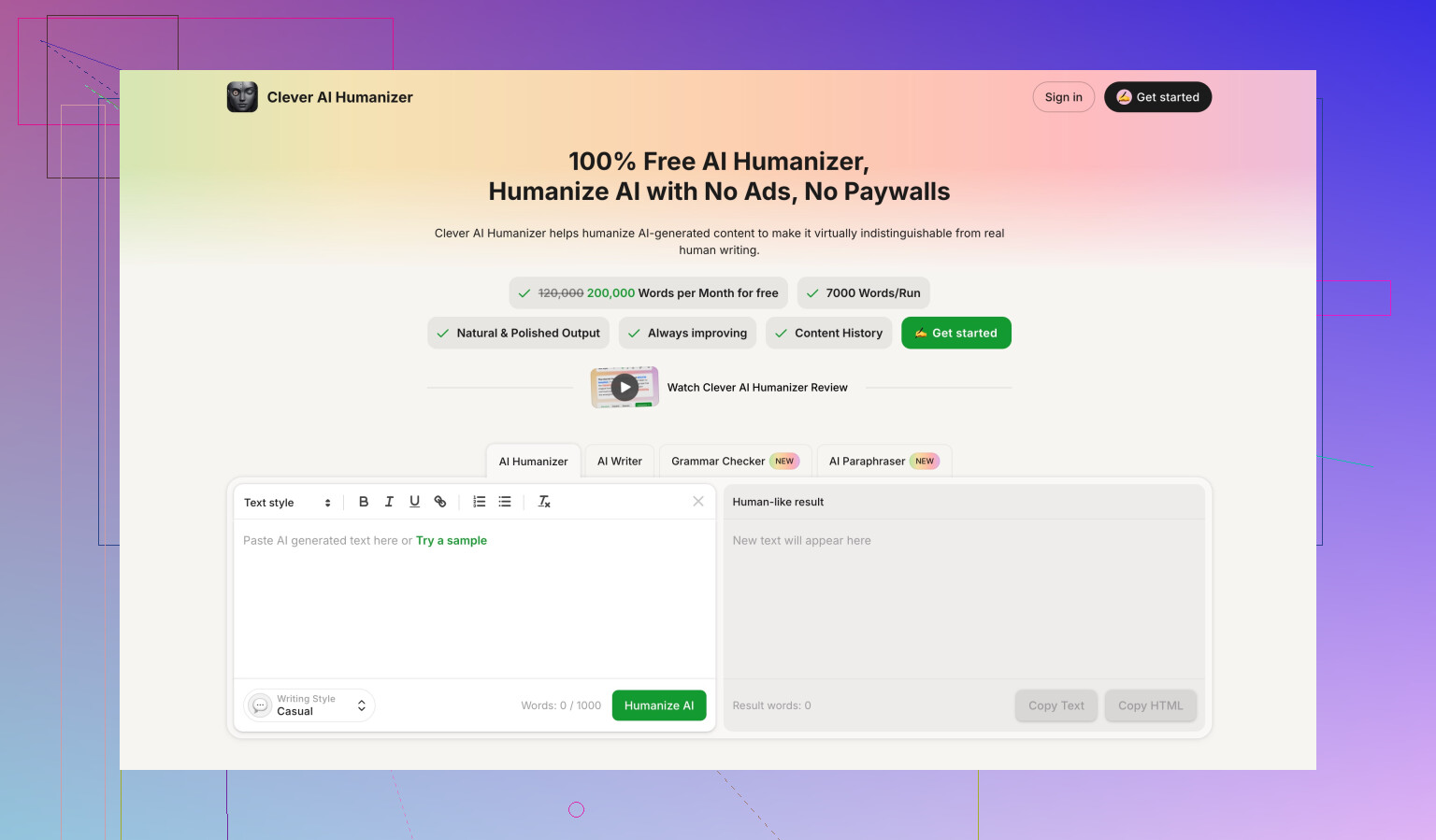

For comparison, when I benchmarked other tools side by side, Clever AI Humanizer did better for me. It scored higher against detectors in my runs, and it is fully free for now. Their test writeup is here if you want to check the details:

If you are thinking about paying for GPTHuman, I would test your own sample text first, run it through GPTZero and ZeroGPT, and see if you get different results than I did. Right now, for my use, it did not justify the cost or the limitations.

You are not missing a secret setting. The “off” feeling is normal with GPTHuman right now.

What I would do if you still want to squeeze use out of it:

- Use it only on short chunks

150 to 300 words per run.

It seems to break more on long, complex paragraphs.

- Turn off “fix everything in one go” in your own workflow

First run your raw AI text.

Then lightly edit by hand.

Do not keep re-feeding GPTHuman on the same text. It tends to drift and introduce more weird synonyms and half sentences.

- Keep your own style as the reference

Take one sample of your real writing and compare:

• Sentence length

• How often you use “I” or “we”

• How you open paragraphs

After GPTHuman runs, adjust those three things by hand. It helps align the tone.

- Do a manual “sanity scan” every time

Read aloud once.

Watch for:

• Odd word swaps that change meaning

• Sentences that stop early

• Grammar slips that you would never write

If you hear a stumble, rewrite that line yourself.

- Do not trust its “human score” as a safety check

On this part I agree with @mikeappsreviewer.

Use external detectors if that matters for your use case, like GPTZero or ZeroGPT, and treat GPTHuman’s internal score as marketing, not as a real metric.

- Use GPTHuman as a light rephraser, not a full humanizer

Run it on:

• Intros

• Short conclusions

• Individual tricky paragraphs

Then integrate and smooth the whole piece manually.

If you push full essays through in one go, quality drops hard.

- For a different tool, test Clever Ai Humanizer

If your goal is AI detector resistance plus less mangled grammar, Clever Ai Humanizer is worth a direct A/B test.

Take one paragraph, run it through both tools, and check:

• Readability

• Detector scores

• How much cleanup you need after

If your results “feel off” even after doing the chunking and manual pass, you are not using it wrong. That is just where the tool is right now. At that point, shorter AI output plus more human editing or switching to Clever Ai Humanizer is usually faster than fighting GPTHuman’s quirks.
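For the “keep your own style as the reference” step, the three things to compare can be measured roughly with a script instead of by eye. This is a minimal sketch, assuming English text with blank lines between paragraphs. `style_profile` is a hypothetical helper, and the sentence splitting is a naive regex that will trip on abbreviations like “e.g.”, so treat the numbers as a rough guide only.

```python
import re


def style_profile(text: str) -> dict:
    """Rough style numbers: sentence length, first-person use, paragraph openers."""
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]

    # Naive sentence split: break after ., ! or ? followed by whitespace.
    sentences = [
        s for p in paragraphs for s in re.split(r"(?<=[.!?])\s+", p) if s
    ]

    # Lowercase ASCII word tokens; "I'm" becomes "i" + "m", so "i" still counts.
    tokens = re.findall(r"[a-z]+", text.lower())
    first_person = sum(1 for t in tokens if t in ("i", "we"))

    avg_len = sum(len(s.split()) for s in sentences) / max(len(sentences), 1)
    return {
        "avg_sentence_words": round(avg_len, 1),
        "first_person_per_100_words": round(100 * first_person / max(len(tokens), 1), 1),
        # First three words of each paragraph, to compare how paragraphs open.
        "paragraph_openers": [" ".join(p.split()[:3]) for p in paragraphs],
    }
```

Run it once on a sample of your own writing and once on the GPTHuman output, then compare the two dictionaries side by side. Big gaps in any of the three values point at what to adjust by hand.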

You’re not missing some magic toggle. GPTHuman is just… like that.

What I’d add to what @mikeappsreviewer and @codecrafter already showed:

- Detectors are inconsistent by design

Chasing “0% AI” on every detector is a losing game. Different tools look for different signals, and they get updated all the time. If your main goal is guaranteed bypass, no humanizer is going to be reliable long term. Treat them as “style shifters,” not invisibility cloaks.

- GPTHuman has a “voice” of its own

That “off” feeling is often because GPTHuman imposes its own rhythm:

- Overuse of certain transitional phrases

- Weird synonym swaps that technically fit but feel robotic

- Sentences that lose your original nuance

If your original AI text was already fairly readable, GPTHuman can actually make it worse in terms of authenticity.

- You might be starting from the wrong place

One thing I slightly disagree with from the other replies: I would not start with super-generic AI output and expect GPTHuman to fix it.

It’s usually better to:

- Prompt your AI to write in a more personal, messy, opinionated style from the start

- Include your own anecdotes, preferences, or small contradictions

Then if you still want a humanizer, let it polish lightly instead of transform everything.

- Focus less on “human score,” more on “would I say this?”

Ignore GPTHuman’s internal meter. Treat your own voice as the detector:

- Do you see phrases you’d never use in real life? Replace them.

- Do you see 3–4 sentences in a row with the exact same structure? Break them up.

- Does it sound like a cover letter for a job you don’t want? Tone it down.

- Use a hybrid workflow

Instead of feeding whole essays into GPTHuman:

- Draft with your normal AI tool

- Add your own comments, mini rants, small disagreements in-line

- Then, if you still care about detectors, run only specific “too-clean” segments through a humanizer

This keeps your text from feeling like it was washed through the same filter ten times.

- Try a different humanizer if you’re testing tools, not pledging loyalty

Since you’re already in experiment mode, it’s reasonable to run the same paragraph through GPTHuman and something else like Clever Ai Humanizer and compare:

- Which one keeps your meaning intact

- Which one needs less cleanup

- Which one gets you acceptable detector scores, not perfect ones

Don’t marry a tool that forces you to do more work than it saves.

Bottom line: if your gut says the text feels off, trust that. GPTHuman can be used as a light rephraser in spots, but if you’re trying to fix the “vibe” of AI writing or ensure absolute detector safety, you’re probably fighting the wrong battle with the wrong tool.
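For the “3–4 sentences in a row with the exact same structure” check, a crude proxy is to flag runs of consecutive sentences that open with the same word. This is a minimal sketch under that assumption; `repeated_opener_runs` is a hypothetical helper, the sentence split is a naive regex, and sharing a first word is only a rough stand-in for sharing a structure. It will miss plenty and should not replace reading the text aloud.

```python
import re


def repeated_opener_runs(text: str, min_run: int = 3) -> list[list[str]]:
    """Return runs of at least min_run consecutive sentences sharing a first word."""
    # Naive sentence split: break after ., ! or ? followed by whitespace.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]

    runs: list[list[str]] = []
    current: list[str] = []
    current_opener = None

    for sentence in sentences:
        # First alphabetic word, lowercased; curly apostrophes normalized first.
        match = re.search(r"[A-Za-z']+", sentence.replace("\u2019", "'"))
        opener = match.group(0).lower() if match else ""

        if current and opener and opener == current_opener:
            current.append(sentence)
        else:
            if len(current) >= min_run:
                runs.append(current)
            current = [sentence]
            current_opener = opener

    if len(current) >= min_run:
        runs.append(current)
    return runs
```

Anything it returns is a candidate for breaking up by hand: merge two of the sentences, flip one around, or cut one entirely.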