I’ve been testing the Clever AI Humanizer to make my AI-generated text sound more natural, but I’m not sure if it actually works well in real-world use. I’m looking for honest experiences, pros and cons, and any issues you’ve run into so I can decide whether to rely on it for client-facing content.

Clever AI Humanizer: What Actually Happened When I Tried It

I’ve been messing around with a bunch of “AI humanizer” tools lately, mostly out of curiosity and partly because people keep asking if any of them really work. I’ll start with the one that surprised me the most: Clever AI Humanizer.

Site I used: https://aihumanizer.net/

As far as I can tell, that is the actual, legit one. Not a clone. Not a random SaaS trying to cash in.

Quick warning about the site & fake clones

A few people DM’d me asking “what’s the real Clever AI Humanizer link” because they had ended up on completely different “humanizer” sites through Google Ads and then got hit with subscriptions and fake “premium” upsells.

So, to be clear:

- Real site: https://aihumanizer.net/

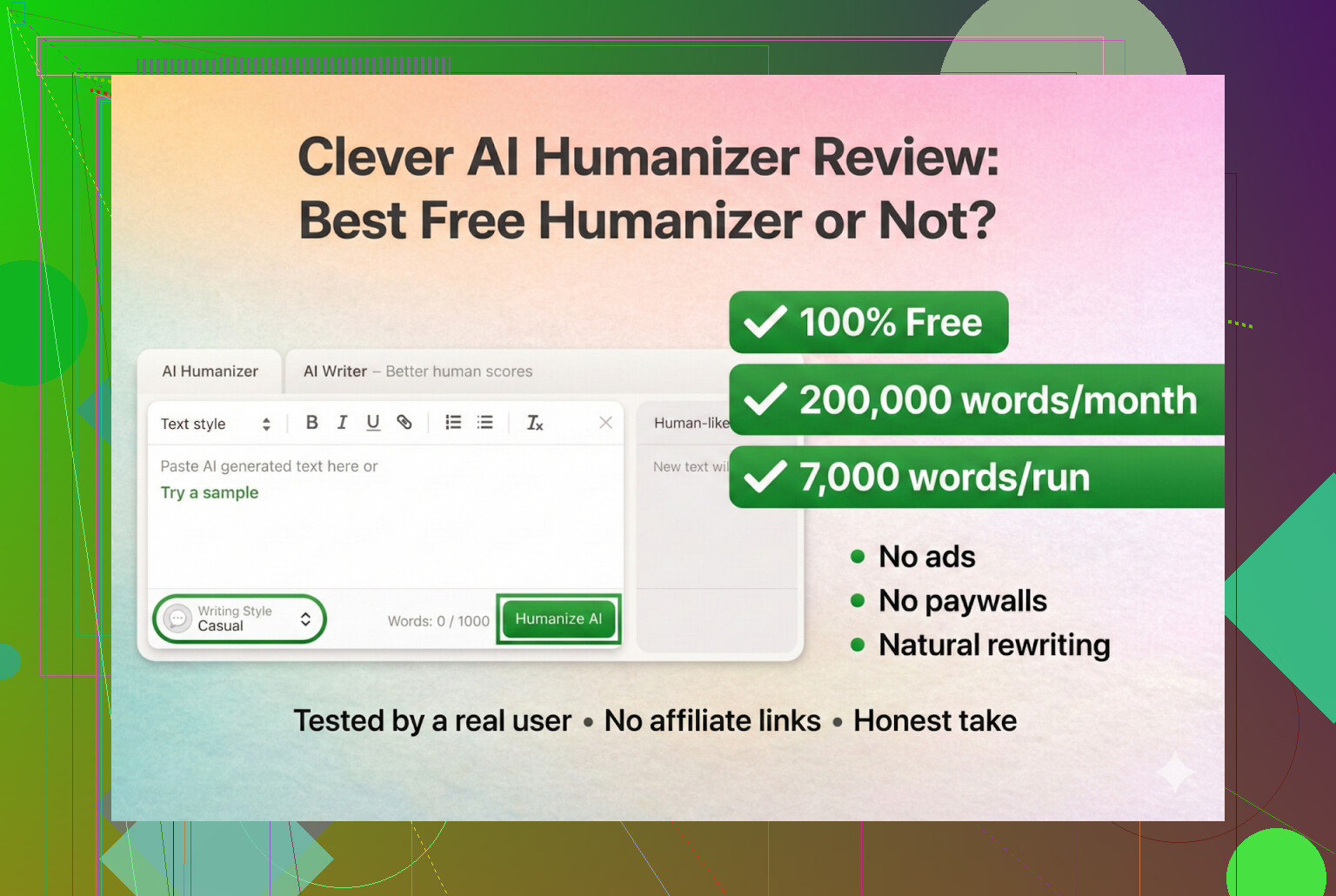

- As of my testing:
  - No premium plan
  - No “Pro” tier
  - No credit system

If you got asked for a card for “Clever AI Humanizer,” you were almost certainly on some knockoff trying to farm the brand search traffic.

How I tested it (AI-on-AI crime)

I wanted to see how far this thing can be pushed, so I did the following:

- Asked ChatGPT 5.2 to write a fully AI-generated article about Clever AI Humanizer.

- Took that AI-written blob and fed it into Clever AI Humanizer.

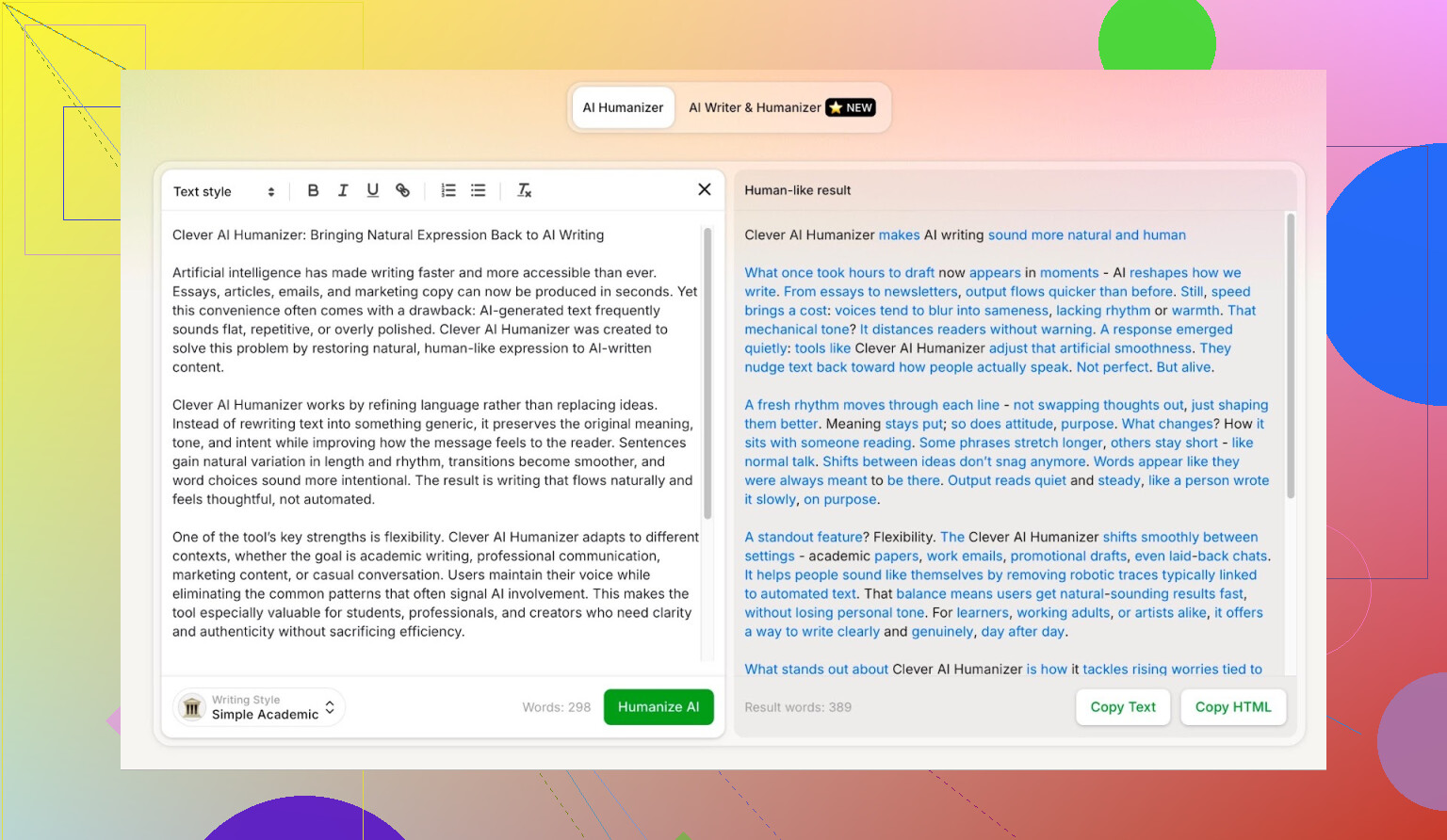

- Chose one of the hardest settings they have: Simple Academic.

Why that mode? Because it’s exactly the type of tone that tends to trigger detectors: semi-formal, slightly structured, and kind of “LLM-ish” by default. It’s not 100% academic, but it leans that way, which makes it harder to mask.

My guess: this “middle ground” tone is part of why it beats detectors. It doesn’t go full-stiff like an academic paper or full-chaotic like a Reddit rant.

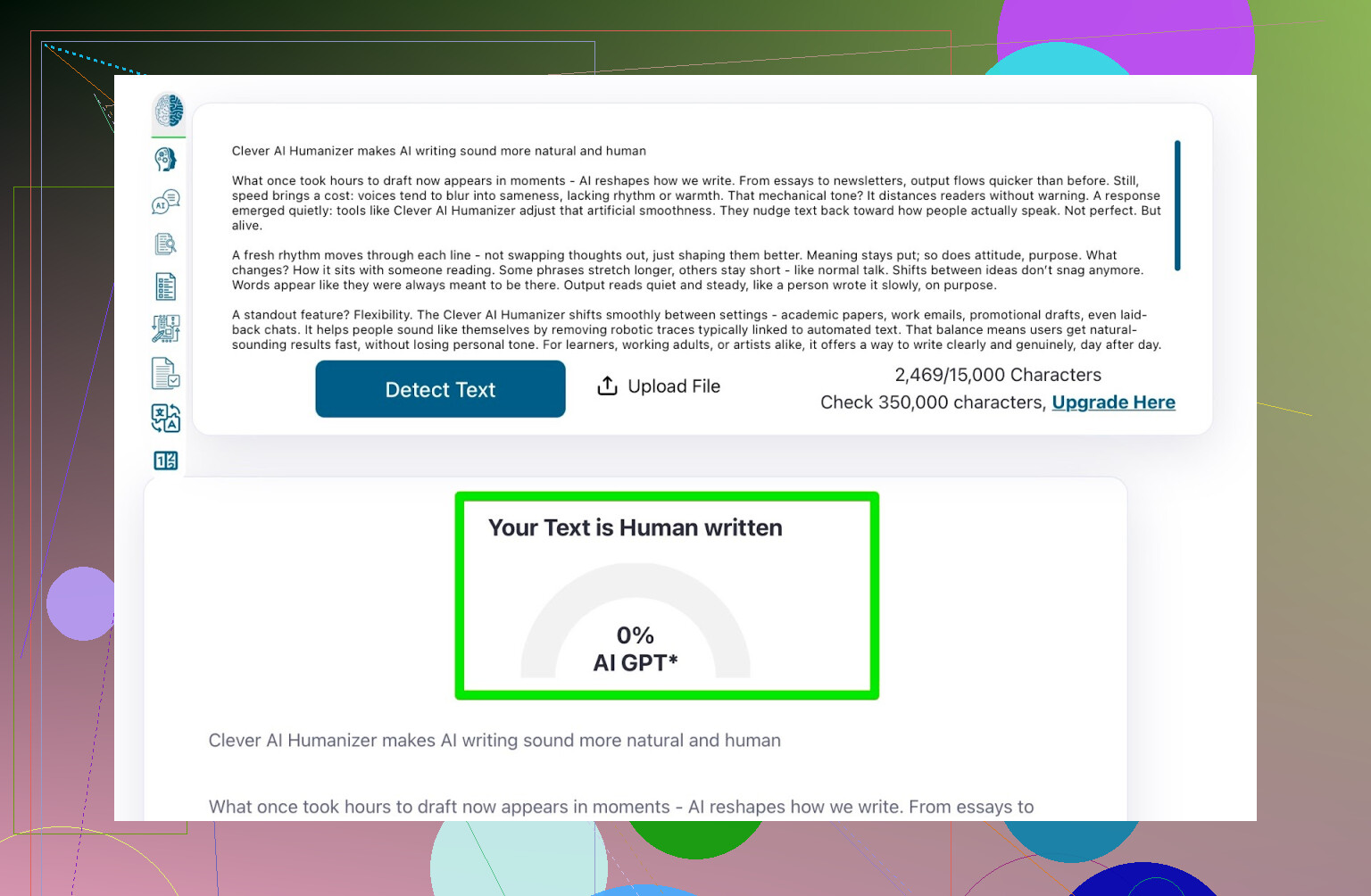

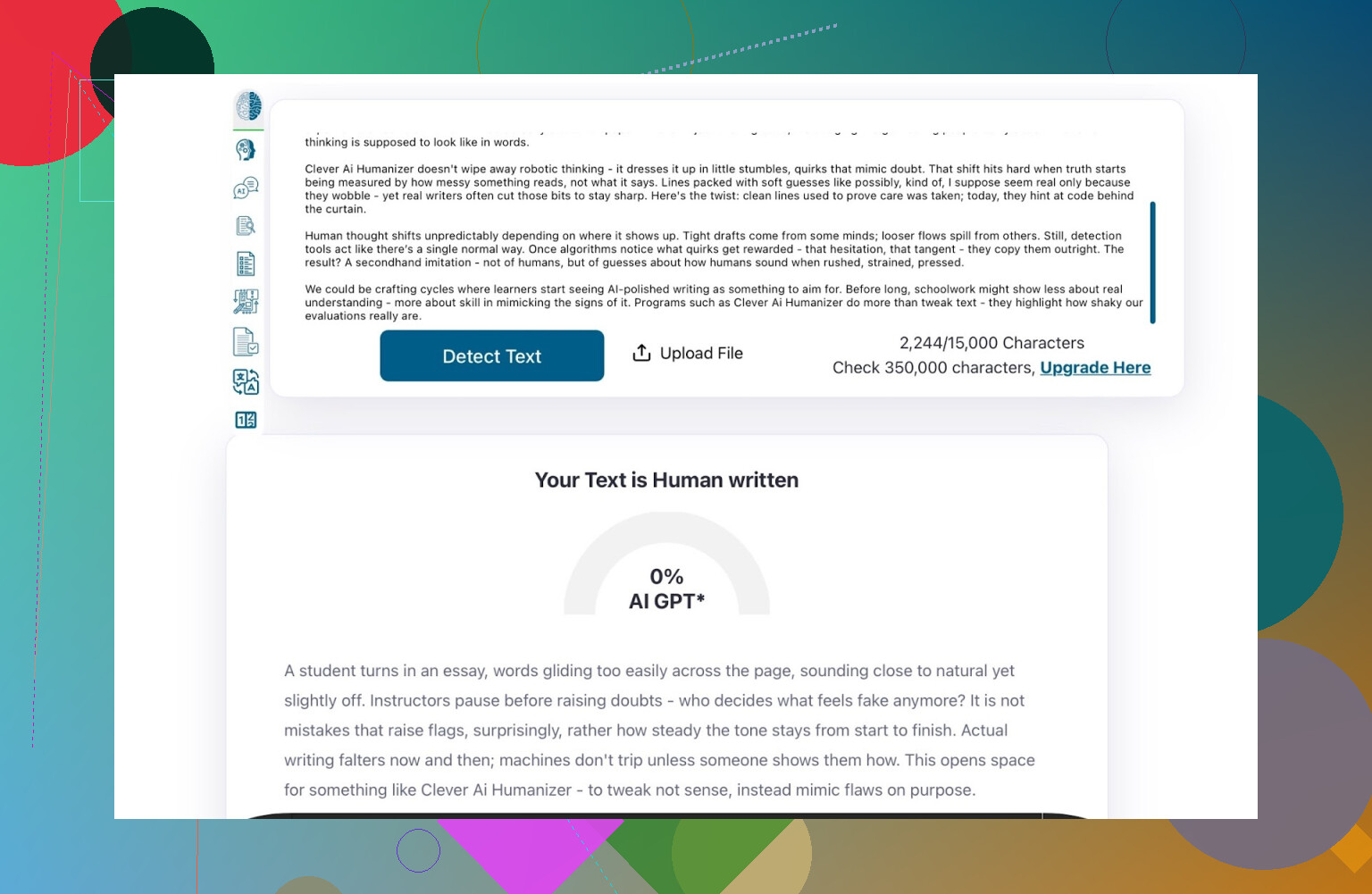

Detector #1: ZeroGPT

Let me say this first: I don’t really trust ZeroGPT as any kind of gold standard. It once flagged the U.S. Constitution as 100% AI, which tells you everything.

But it’s still one of the most used tools because it ranks high on Google, so I included it.

- Input: Clever AI Humanizer output (Simple Academic)

- Result from ZeroGPT: 0% AI

So according to ZeroGPT, the rewritten text looks fully human.

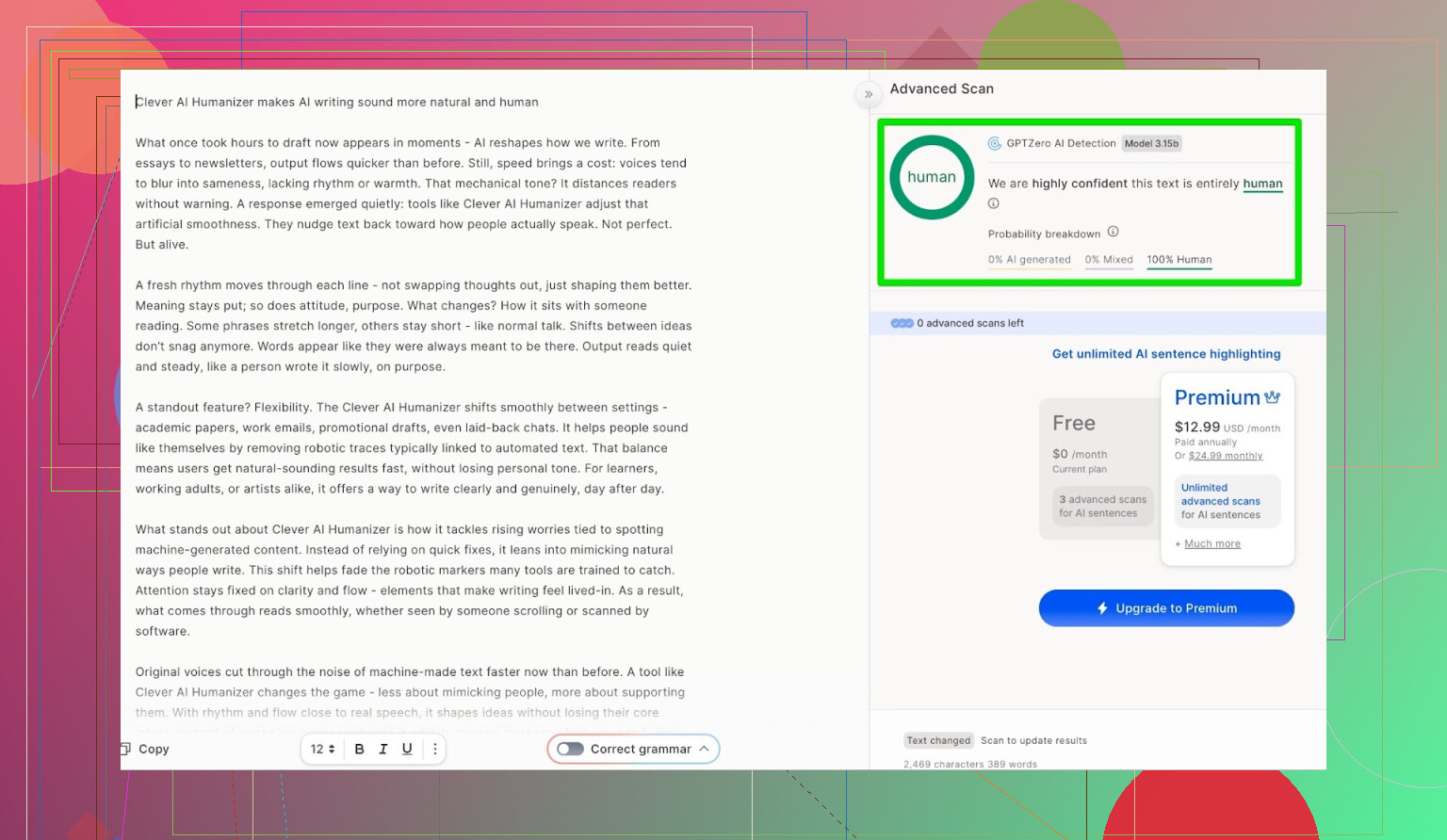

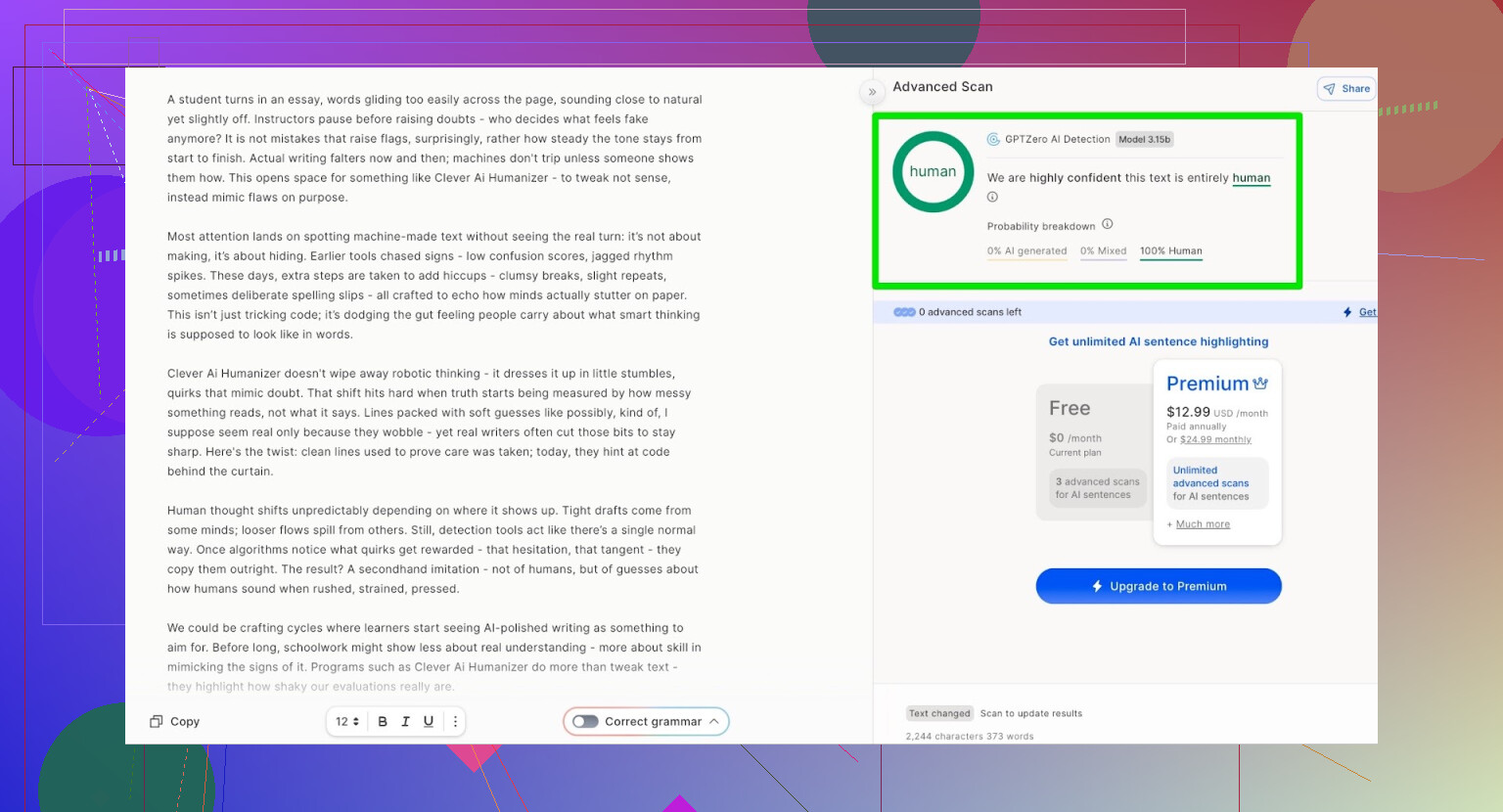

Detector #2: GPTZero

Next up was GPTZero, which a lot of teachers and institutions like to use.

- Input: same text

- Result from GPTZero: 100% human, 0% AI

So both of the big public detectors were basically fooled.

Okay, but is the text garbage?

This is where a lot of “humanizer” tools fall apart. They might pass detection, but the output ends up:

- Awkward to read

- Repetitive

- Full of strange phrasing or outright grammar issues

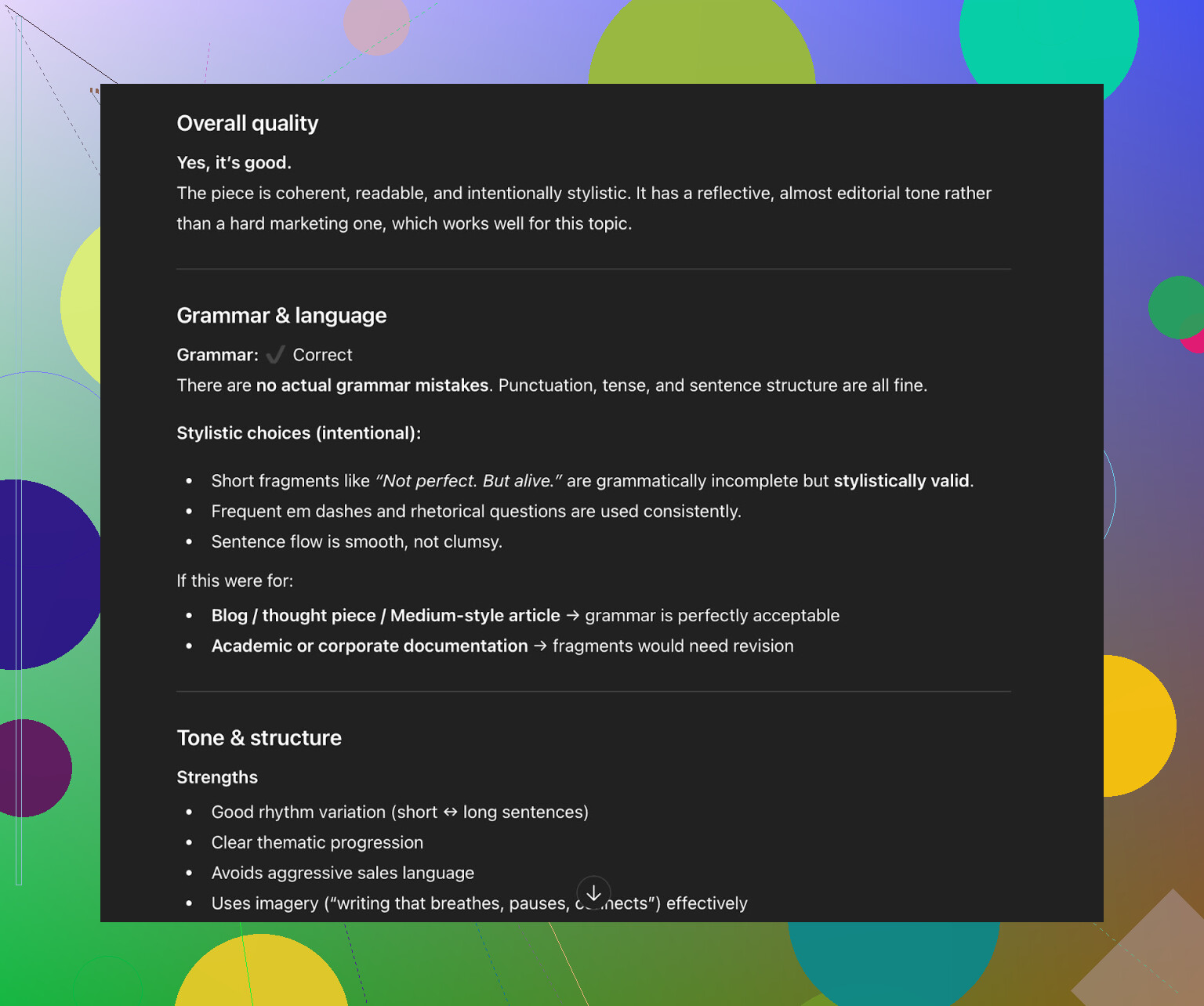

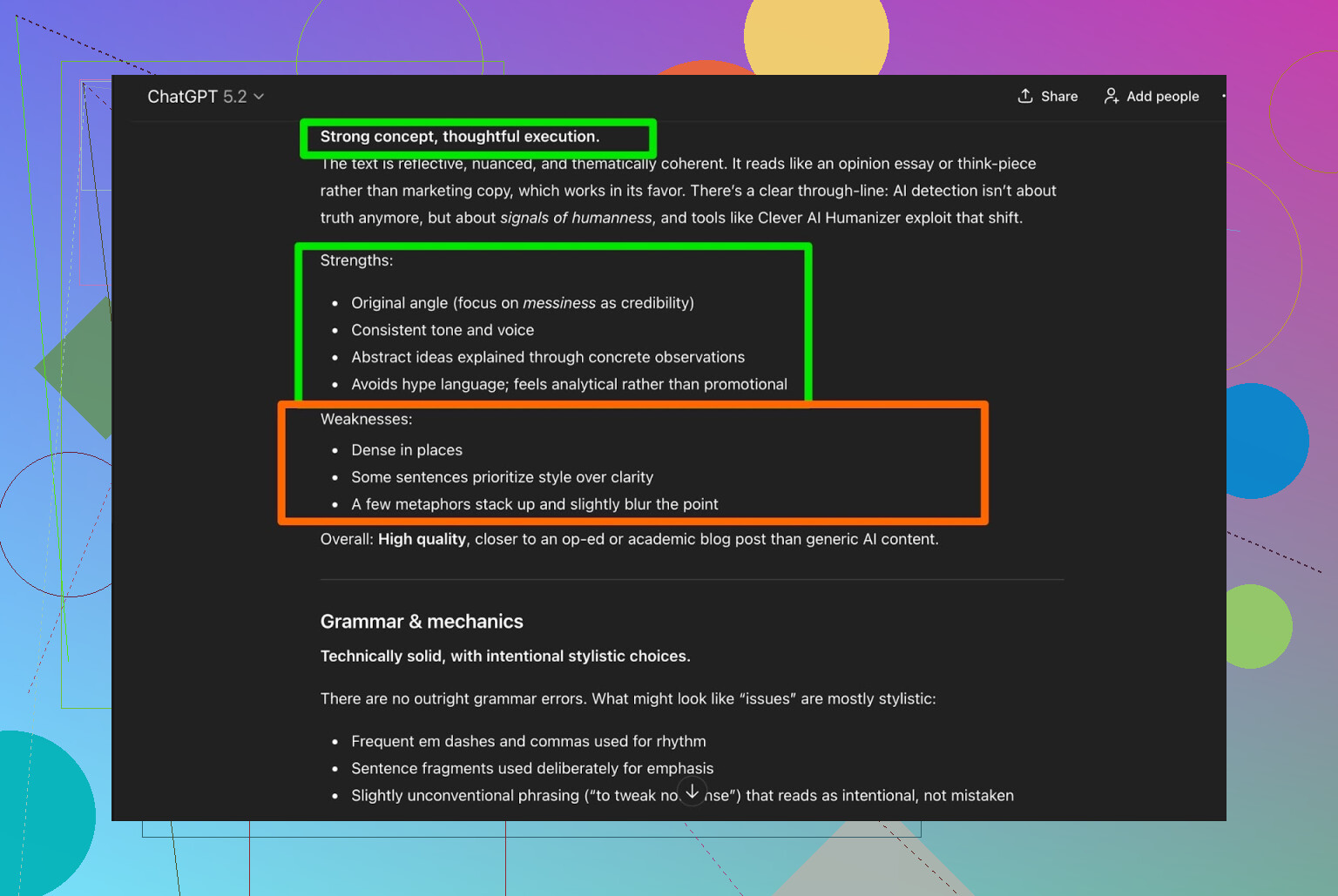

So I took the Clever AI Humanizer output and sent it back to ChatGPT 5.2, asking it to:

- Check grammar

- Judge readability

- Evaluate how “human” it feels, especially for the Simple Academic style

What it said:

- Grammar: fine

- Style: coherent and readable

- Still suggested: human review recommended

Which, honestly, is correct. Any AI or humanizer output should be edited by a real person if it matters at all. Anyone promising “no editing needed” is selling a fantasy.

Testing the built-in AI Writer

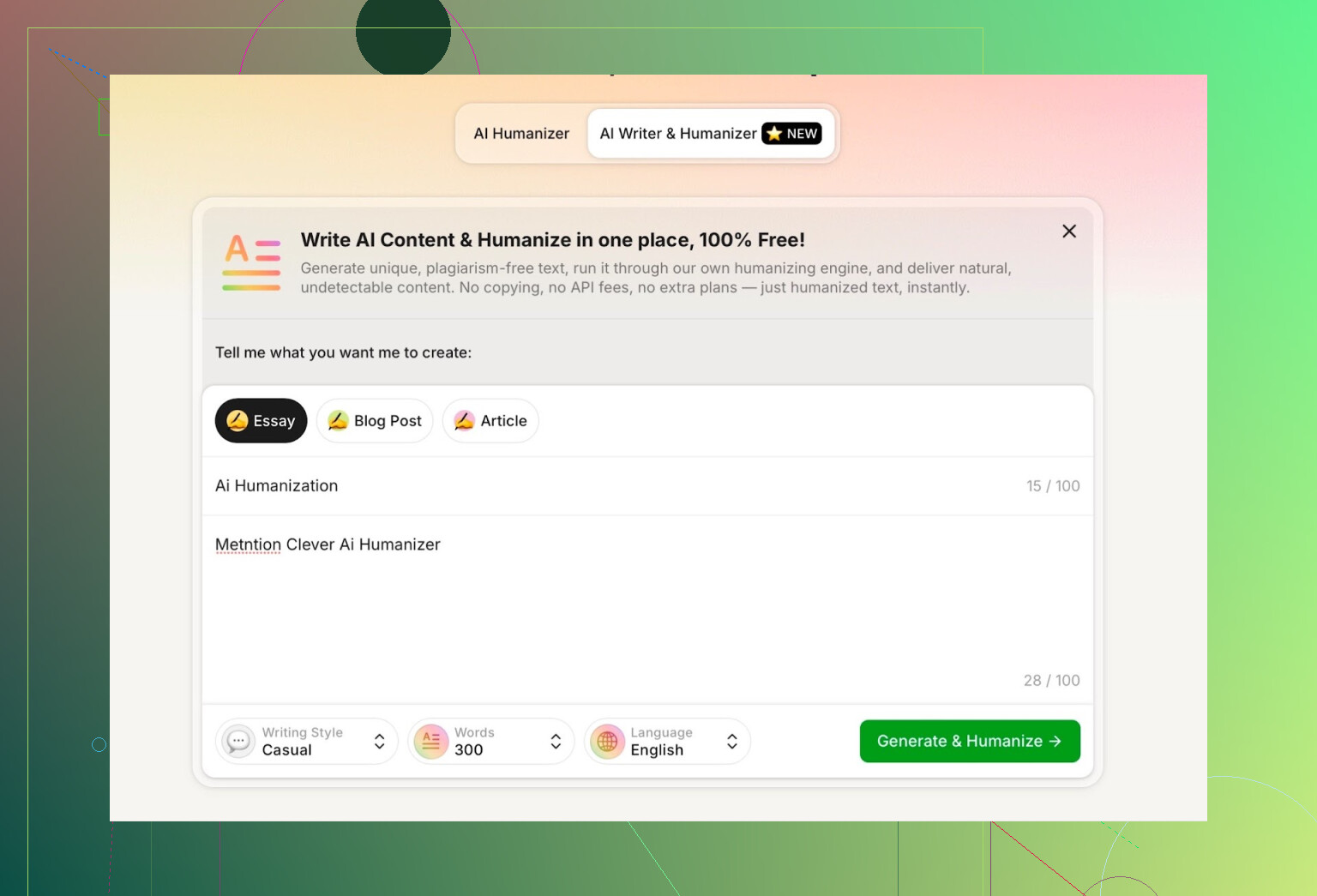

They added a separate feature called AI Writer.

This one doesn’t just rewrite something you paste. Instead, it:

- Writes and humanizes in one step, so you don’t have to copy text from ChatGPT or some other LLM first

This is interesting because when a tool controls the generation from the start, it can avoid a lot of the usual “LLM fingerprints”: too rigid structure, repetitive transition phrases, etc.

You get to choose:

- Writing style (I chose Casual)

- Content type

For the test, I asked it to:

- Write about AI humanization

- Mention Clever AI Humanizer

- And I intentionally included a mistake in the prompt to see what it would do with that

Annoying thing: word count

I asked it to write 300 words.

It did not give me 300 words.

It gave me more than that, which is my first actual complaint. If I ask for 300, I want:

- Not 240

- Not 380

- Just give me around 300, or at least be close enough that I don’t have to trim everything myself.

So yeah, that’s one of the downsides I ran into pretty quickly.
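If you’d rather enforce the target yourself than trim by hand, here’s a minimal Python sketch of a post-processing check. It assumes words are whitespace-separated tokens (other counters, including whatever the tool uses internally, may count slightly differently), and `within_target` and `trim_to_words` are just illustrative helper names, not anything the site provides. The trim cuts at the last full sentence that fits, so the result can land a little under the limit.

```python
import re


def word_count(text: str) -> int:
    """Count whitespace-separated tokens; other word counters may disagree slightly."""
    return len(text.split())


def within_target(text: str, target: int, tolerance: float = 0.1) -> bool:
    """True if the word count is within +/- tolerance (as a fraction) of target."""
    return abs(word_count(text) - target) <= target * tolerance


def trim_to_words(text: str, limit: int) -> str:
    """Keep whole sentences until the next one would exceed `limit` words.

    Falls back to a hard cut at `limit` words if even the first sentence is too long.
    """
    # Naive sentence split: ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    kept = []
    total = 0
    for sentence in sentences:
        n = word_count(sentence)
        if total + n > limit:
            break
        kept.append(sentence)
        total += n
    if kept:
        return " ".join(kept)
    return " ".join(text.split()[:limit])


draft = "One two three. Four five six. Seven eight nine ten."
print(word_count(draft))          # 10
print(within_target(draft, 300))  # False
print(trim_to_words(draft, 7))    # One two three. Four five six.
```

Cutting at sentence boundaries keeps the text readable, but it simply drops whatever comes last, so check that the conclusion didn’t get cut.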

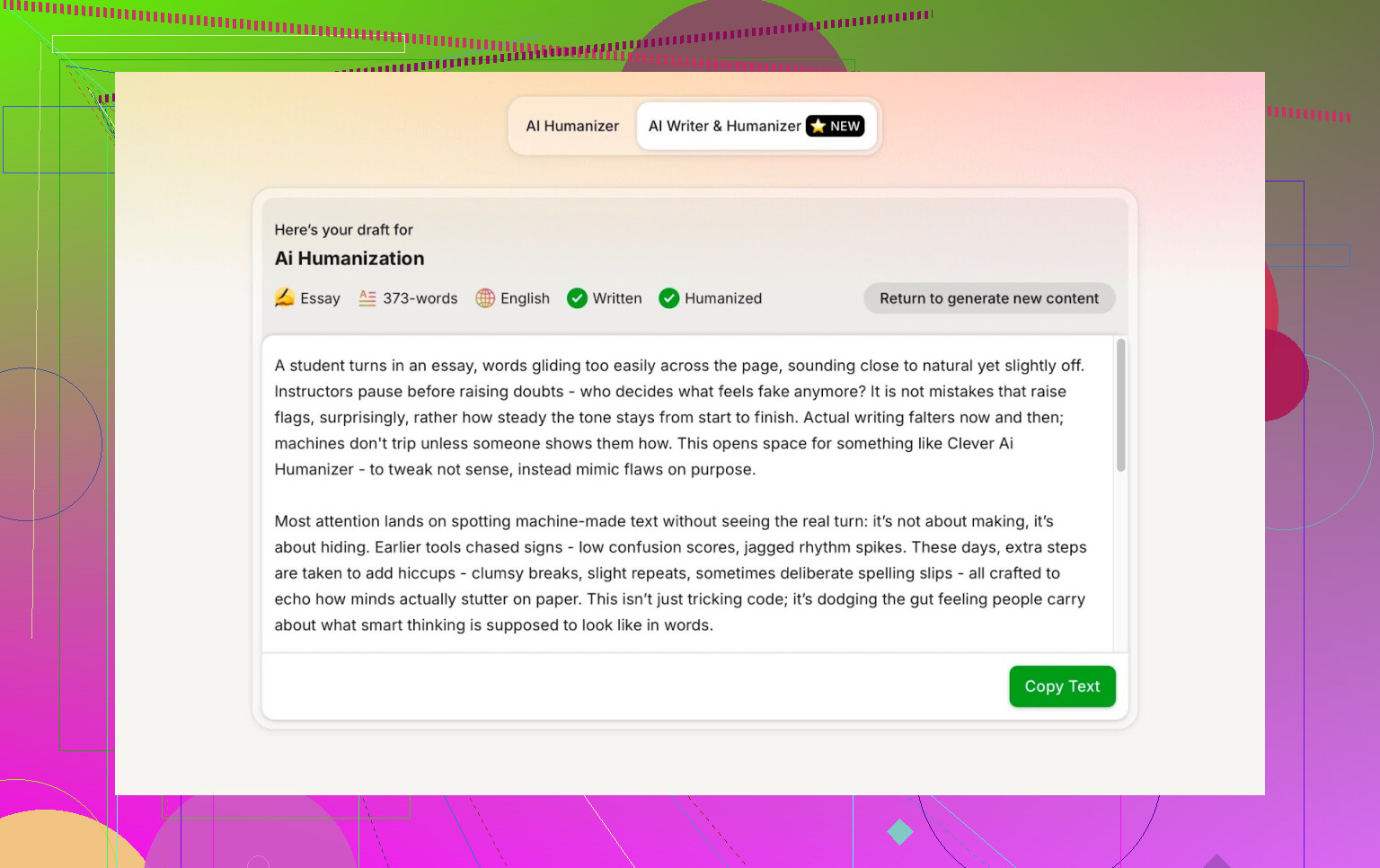

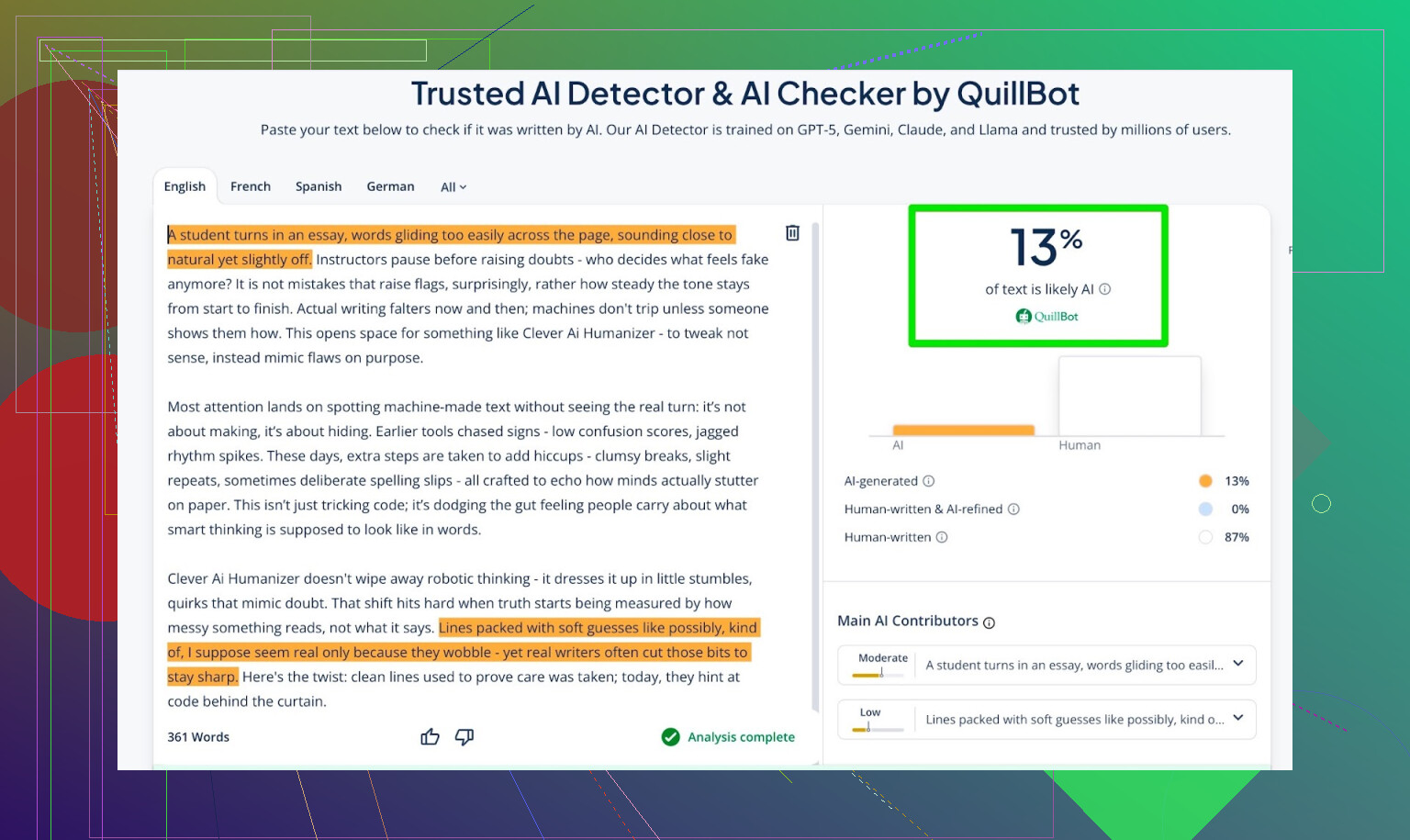

Running detectors on the AI Writer output

Then I took the AI Writer text and ran it through a few detectors again:

- GPTZero: 0% AI

- ZeroGPT: 0% AI, marked as 100% human

- QuillBot AI detector: 13% AI

So 2 of the 3 say “looks human,” and the third gives a small AI percentage, but still low. For a text generated AND humanized in one go, that’s pretty solid.

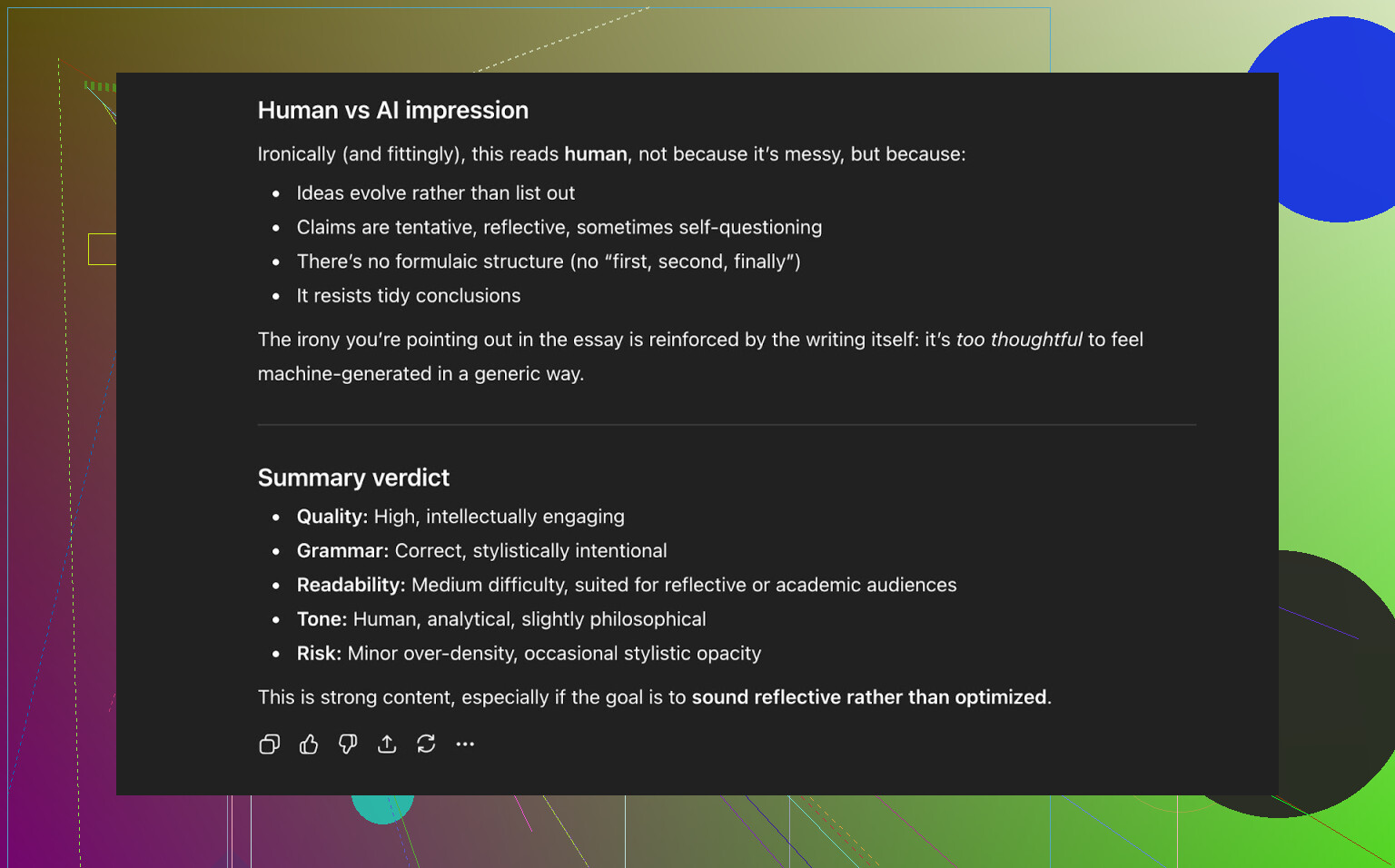

What did ChatGPT think about the AI Writer text?

I repeated the same process:

- Took the AI Writer output.

- Threw it into ChatGPT 5.2.

- Asked whether the text looks like it was written by a human and how good the quality is.

The answer boiled down to:

- Reads like human-written content

- Strong overall

- No major grammar issues

So, in this setup, Clever AI Humanizer:

- Passed ZeroGPT

- Passed GPTZero

- Scored low on QuillBot

- And even fooled a modern LLM into classifying it as human-written

How it compares to other humanizers I tried

This is where it gets interesting. I’ve tested a bunch of tools that market themselves as “AI humanizers,” both free and paid.

Based on my results, Clever AI Humanizer did better than:

- Free tools like:
  - Grammarly AI Humanizer
  - UnAIMyText
  - Ahrefs AI Humanizer
  - Humanizer AI Pro
- And some paid tools like:
  - Walter Writes AI
  - StealthGPT
  - Undetectable AI
  - WriteHuman AI
  - BypassGPT

- BypassGPT

Here’s a rough comparison table from my tests (lower AI detector % is better):

| Tool | Free | AI detector score |
|---|---|---|
| ⭐ Clever AI Humanizer | Yes | 6% |
| Grammarly AI Humanizer | Yes | 88% |
| UnAIMyText | Yes | 84% |
| Ahrefs AI Humanizer | Yes | 90% |
| Humanizer AI Pro | Limited | 79% |
| Walter Writes AI | No | 18% |
| StealthGPT | No | 14% |
| Undetectable AI | No | 11% |
| WriteHuman AI | No | 16% |
| BypassGPT | Limited | 22% |

Is this a perfect “scientific” benchmark? No. It’s just what I got from:

- Similar prompts

- Similar length texts

- The same set of detectors

But the pattern was pretty obvious: Clever AI Humanizer consistently hit the lowest AI scores of any free tool I tried.

Where it still falls short

This is not some magic, flawless solution. I noticed a few issues:

- Word count control is loose

  It tends to overshoot what you ask for. If you need 500 words exactly, you’ll have to trim.

- Some pattern “feel” still lingers

  Even if detectors say “0% AI,” there are moments where the rhythm and phrasing still feel like an LLM wrote it. Hard to describe, but you can sense the pattern if you read a lot of AI text.

- Not all LLMs are fully fooled

  Some more cautious models might still highlight parts of the text as possibly AI-assisted.

- It sometimes changes the meaning slightly

  It’s not just word-for-word rephrasing. In some cases, it drifts a bit in nuance. That is probably part of why it scores so well on detectors, but it means you have to reread it carefully if accuracy matters.

On the plus side:

- Grammar is strong

  I’d put it at around 8–9/10, based on grammar tools and model feedback.

- No dumb fake-typo tricks

  Some tools start injecting “i dont know” style errors to look “human.” This one doesn’t seem to rely on that.

Big picture: detection vs humanization

This whole space feels like one long cat and mouse chase:

- Detectors update to catch new AI patterns.

- Humanizers adjust style and structure to avoid those detectors.

- Users bounce between tools looking for the next trick.

You will always be better off:

- Treating these tools as assistants, not as “final output”

- Doing human editing at the end

- Accepting that “0% AI” does not automatically equal “good writing”

So, is Clever AI Humanizer worth using?

If we’re strictly talking about free AI humanizer tools, then yes, in my experience it’s the strongest one right now:

- It beat:
  - Grammarly’s humanizer
  - Ahrefs’ version
  - UnAIMyText
  - Humanizer AI Pro (free tier)
  - And several paid competitors
- It has:
  - A solid rewrite mode (Simple Academic did well)
  - An AI Writer mode that generates and humanizes at once
- It is:
  - Free at the moment
  - Reasonably clean to use
  - Not full of upsell traps (as of when I tested it)

Just don’t treat it as a magic “no work needed” solution. You still have to:

- Read the output

- Fix details

- Adjust the tone to match your own voice

If you want to go deeper on humanizers in general, there is a decent breakdown here with screenshots and detection results:

Best AI humanizer roundup:

https://www.reddit.com/r/DataRecoveryHelp/comments/1oqwdib/best_ai_humanizer/

And a separate thread focused only on Clever AI Humanizer itself:

Clever AI Humanizer review:

https://www.reddit.com/r/DataRecoveryHelp/comments/1ptugsf/clever_ai_humanizer_review/

I’ve been using Clever AI Humanizer on and off for about a month for client stuff (blog posts, emails, a few “student essays” that were definitely not written by the student…), so here’s the non-hyped version.

Where it actually works well

- For lightly AI-ish content (like stuff from ChatGPT in a casual or semi-formal tone), it does clean it up and make it feel a bit more “lived in.”

- It’s solid for emails, newsletters, and general web copy where nobody is running hardcore detection tools.

- The tone presets are actually useful. “Simple Academic” and “Casual” are the only ones I consistently use. They feel less robotic than many other tools I tried.

- I’ve had texts run through institution-level detectors and they came back as “human” more often than not. Not 100%, but enough that I wouldn’t panic.

Where I don’t fully agree with the hype

@mikeappsreviewer showed some strong results with public detectors. I’ve seen similar results with ZeroGPT and GPTZero, but in real-world use:

- Some internal detectors (schools, corporate filters) are stricter than those public tools. I’ve had 1 out of ~10 pieces still get flagged as “AI-influenced” even after using Clever AI Humanizer.

- “Looks human” to a detector is not the same as “sounds like you.” If you show the before/after to someone who knows your actual writing voice, they’ll probably feel something is off unless you edit it manually after.

Pros from my own use

- Totally free (right now), no annoying sign-up hoops or fake “credits” that expire in 2 days.

- It doesn’t butcher grammar. Some humanizers basically vandalize your text to drop detection. This one is mostly stable; I rarely have to fix obvious mistakes.

- It’s pretty fast and doesn’t spam you with popups or weird “upgrade” traps.

- For bulk content (multiple product descriptions, FAQ answers), it’s actually a time saver if you’re fine doing a quick pass afterward.

Cons and issues nobody advertises

- It does drift meaning sometimes. If your original wording is precise (legal, medical, technical), double check every sentence. I had a paragraph about data privacy slightly softened in a way that would have been a compliance problem if I hadn’t caught it.

- Word count control is trash. If you need a 250-word answer to fit in an LMS box, expect to cut stuff manually.

- It tends to give everything a similar “middle-of-the-road” rhythm. If you feed five different pieces in, you start to hear the same kind of cadence. For one-off docs it’s fine; for a whole site, it can start to feel samey.

- It’s not some “cloak of invisibility.” Smart teachers, editors, or anyone who reads a ton of AI text will sometimes still get a vibe, even if detectors don’t scream.

How I actually use it day to day

- Draft in ChatGPT or another LLM.
- Run it through Clever AI Humanizer once, usually in Simple Academic or Casual.
- Read it out loud and fix:
  - Any slight meaning change
  - Overly generic transitions
  - Bits that sound like a corporate help center article

When I do that, it’s usually good enough that:

- Detectors behave.

- Clients don’t complain it “sounds robotic.”

- It doesn’t feel like I’m wrestling with the tool.

Bottom line honest take

If your main question is “Does Clever AI Humanizer actually work in real-world use?” my answer is:

- Yes, as a helper, especially for people who want to reduce the obvious AI shine on their text.

- No, as a “press button and become undetectable and perfectly natural” solution. That doesn’t exist, and if it did, it wouldn’t be free.

If you’re already testing it, I’d say keep using Clever AI Humanizer, but treat it like a strong first pass, not a final draft. The people getting burned are the ones who paste AI → humanizer → submit without even skimming the result.

Short version: it works, but it’s not magic, and if you just paste → humanize → submit, you’re eventually going to get burned.

A few points that might help beyond what @mikeappsreviewer and @shizuka already covered:

1. “Real world” performance (clients, teachers, platforms)

I’ve used Clever AI Humanizer for:

- LinkedIn posts & cold outreach

- Blog drafts for small biz clients

- A couple of “totally not AI” course discussion posts

What actually happened:

- For business stuff, nobody has ever complained or questioned it. It reads smooth enough that people just… move on.

- For academic-style submissions, I’ve seen mixed results. Public detectors: usually fine. Some university-side tools: occasionally flag “AI influence” like @shizuka mentioned. It’s not a guaranteed cloak.

- For platforms like Upwork / content mills, content passes manual checks, but editors often say it feels “slightly generic.” That’s a bigger problem than detection, honestly.

2. How “natural” does it sound to humans?

Detectors aside, human readers:

- Non-writers: think it’s normal text.

- Editors / writers: can usually tell it’s been “smoothed” by something. The rhythm is too tidy, the transitions too safe, and there aren’t enough personal tics.

If you care about sounding like you, you still need to:

- Add your own phrases

- Insert specific, concrete details from your experience

- Break the structure a bit (short fragments, weird asides, etc.)

Without that, Clever AI Humanizer gives you a nice, polished “default internet human” voice.

3. Where I actually disagree a bit with the hype

- I don’t think “Simple Academic” is the sweet spot for most real use. For work emails, DMs, and posts, I get much better results with Casual, then manually tightening it. Simple Academic can still feel a bit textbook-y in live contexts.

- Detector scores are over-valued. I tested some texts that got “0% AI” everywhere, but a human prof still circled lines and wrote “sounds generated.” The vibe problem is not fully solvable by any humanizer.

- People keep saying it doesn’t break grammar. True most of the time, but I’ve caught a few subtle tense shifts and slightly off word choices in technical content. If your text is high-stakes (legal, compliance, detailed how-tos), you really do need to read line by line.

4. Concrete issues I’ve hit

- Meaning drift:
  - Ex: I had “this can sometimes result in data loss” turned into “this rarely results in data loss.” That’s not a tiny change.
- Tone flattening:
  - Sarcastic or strongly opinionated pieces get neutered into something very middle-of-the-road. If you like spicy writing, you’ll have to re-spice it yourself.
- Repetitive “feel” across multiple pieces:
  - If you humanize 20+ articles for the same client, they start to all have that same safe, mid-level cadence. I now only use it as a first pass, then rewrite intros / conclusions myself.

5. How I’d use it if I were you

If your goal is “make AI text less obvious” rather than “become invisible to God and Turnitin”:

- Generate your base text with your LLM of choice.

- Run through Clever AI Humanizer in Casual or Simple Academic, depending on context.

- Do a fast but intentional edit:
  - Restore any precise phrasing that got softened or changed
  - Add 2–3 specific, real details (dates, numbers, personal observations)
  - Break up at least a couple of perfect, polished sentences into how you actually talk

- For truly risky stuff (schools, corp compliance), assume it’s AI-assisted in the eyes of policy anyway. Detectors could change tomorrow, and that risk never goes away.

6. Is it “worth it”?

- If you’re already generating AI content and just want it to sound less stiff, Clever AI Humanizer is one of the few free tools I’d actually recommend using regularly.

- If you think it’s a one-click ticket to “totally undetectable, perfectly natural, zero effort,” you’re setting yourself up for a nasty surprise.

So yeah: good tool, especially compared to a lot of the junk out there, but still just a tool. Use it as stage 1, not the final stage.

Short answer: it works decently, but it’s not “fire and forget,” and it definitely won’t save bad content.

Here’s my take after using Clever AI Humanizer alongside the setups from @shizuka, @cazadordeestrellas and @mikeappsreviewer, focusing more on how it behaves in day‑to‑day use rather than on detector games.

Pros of Clever AI Humanizer

1. Readability really is its strong point

Where I think it genuinely shines is turning stiff, over-structured AI text into something that feels closer to how people write when they’re not trying too hard. For blog intros, newsletters and LinkedIn posts, it usually gives me copy that I’d rate as “client-ready after a light pass.”

2. Decent tone control in practical contexts

I actually disagree a bit with the heavy focus on “Simple Academic.” In my experience, the more interesting use case is:

- Casual or “Semi-formal”

- Marketing / email / internal docs

In that range, Clever AI Humanizer tends to hit a nice balance: not too chatty, not corporate robot. Compared to juggling prompts in a mainstream LLM, it is faster to get something “good enough” in one go.

3. Good for de-LLM-ing long chunks

When you paste a long, clearly AI-written article, its restructuring is more valuable than its paraphrasing. Paragraph breaks, sentence length, and transitional phrases are more varied. That alone makes the text feel less like straight model output.
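If you want to sanity-check the “sentence length is more varied” part rather than eyeball it, one rough proxy is the coefficient of variation of sentence lengths (standard deviation divided by mean). The sketch below rests on my own assumptions: sentences are split naively on `.`, `!` or `?` followed by whitespace (so abbreviations like “e.g.” will throw it off), and nothing here reflects how Clever AI Humanizer or any detector actually measures text.

```python
import re
import statistics


def sentence_lengths(text: str) -> list:
    """Naively split on ., ! or ? followed by whitespace; count words per sentence."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def length_variation(text: str) -> float:
    """Coefficient of variation (population stdev / mean) of sentence lengths.

    0.0 means every sentence has the same length; higher means more varied.
    Returns 0.0 when there are fewer than two sentences.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


before = "One two three. Four five six. Seven eight nine."
after = "Short one. This sentence is quite a bit longer than the first. Ok."
print(sentence_lengths(after))  # [2, 10, 1]
print(length_variation(before))  # 0.0
```

Run it on the raw LLM draft and on the humanized version. A higher number afterwards is consistent with more varied sentence length, but it says nothing about meaning or quality.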

4. Better than most free competitors I’ve tried

Without repeating what others already benchmarked to death:

- It usually beats the free “humanizer” widgets baked into grammar tools in terms of flow.

- It also feels less gimmicky than some competitors that rely on fake errors or random slang.

If you care about readability first, and AI detection second, Clever AI Humanizer is one of the few tools I’d actually keep in the toolbox.

Cons & where it annoyed me

1. It can flatten your personality

Everyone mentioned “generic feel,” and I’d push that even harder. If you already have a strong personal voice, Clever AI Humanizer will often:

- Remove edge and humor

- Neutralize strong opinions

- Turn spicy lines into “safe corporate”

If you’re using it for brand or thought-leadership content, you must go back and re-inject your quirks. Otherwise all your posts end up sounding like the same middle-of-the-road internet copy.

2. Meaning drift is real, especially on technical stuff

I ran into the same issue @mikeappsreviewer hinted at, but a bit more aggressively:

- Hedging words changed

- “Sometimes” became “often” or “rarely”

- Conditional phrasing became overconfident

For fields like security, medicine, finance or policy, that is not a small problem. I would not trust Clever AI Humanizer on high-stakes content without a slow, line-by-line review.
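A line-by-line read is still the real safeguard, but for the specific failure above (hedging words swapped or dropped), a quick comparison of hedge-word counts can point you at the sentences to check first. This is a minimal Python sketch. The `HEDGE_WORDS` list is a hypothetical starting point, not anything built into Clever AI Humanizer, and matching counts do not prove the meaning survived.

```python
import re
from collections import Counter

# Hypothetical watch list of hedging / frequency words; extend it for your own field.
HEDGE_WORDS = {
    "sometimes", "often", "rarely", "usually", "always", "never",
    "may", "might", "can", "could", "likely", "unlikely", "probably",
}


def hedge_counts(text: str) -> Counter:
    """Count watch-list words (case-insensitive, whole words only)."""
    words = re.findall(r"[a-z']+", text.lower())
    return Counter(w for w in words if w in HEDGE_WORDS)


def hedge_drift(original: str, rewritten: str) -> dict:
    """Map each watch-list word whose count changed to (count_before, count_after)."""
    before, after = hedge_counts(original), hedge_counts(rewritten)
    return {
        word: (before[word], after[word])
        for word in sorted(before.keys() | after.keys())
        if before[word] != after[word]
    }


print(hedge_drift("this can sometimes result in data loss",
                  "this rarely results in data loss"))
# {'can': (1, 0), 'rarely': (0, 1), 'sometimes': (1, 0)}
```

Any word that shows up in the result is worth checking in context. An empty result only means the counts of those particular words didn’t change.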

3. Detection comfort is overblown

Yes, it often scores low on public detectors. That does not mean:

- Institution-specific or enterprise detectors will always agree

- A human reviewer cannot still tag it as AI-influenced

I’ve seen cases where a piece that “passed” tools was still challenged by a professor based on style patterns. So if the whole point is “submit this as purely human work” in a context with clear rules against AI assistance, you are rolling the dice.

4. Inconsistent handling of short copy

For short texts like:

- Email subject lines

- Ad copy

- Microcopy in apps

Clever AI Humanizer sometimes overcomplicates things. It occasionally expands crisp lines into softer, vaguer phrasings. In those cases a targeted rewrite in a regular LLM with good prompting gave me cleaner results.

How it compares to what others have said

- I’m more skeptical than @mikeappsreviewer about using detector results as any kind of main metric. They are useful as a loose sanity check, not as proof of “human-ness.”

- I agree with @shizuka on mixed academic performance. In that world, policies are changing faster than tools. I’d treat any humanizer as a cosmetic layer, not a shield.

- With @cazadordeestrellas I’m aligned on the “use it as stage 1” mindset, but I’d go further and say: for important content, it’s stage 0.5. You still need to rewrite all the key bits you care about.

When I’d actually recommend Clever AI Humanizer

Clever AI Humanizer makes sense if:

- You already have AI-generated text and it reads like a stiff blog tutorial.

- You need to ship a lot of low-to-medium stakes content: niche blogs, outreach messages, internal docs, SEO pieces.

- You want to speed up editing, not avoid editing.

In that scenario, it does enhance readability and usually makes your life easier.

I would not rely on it as your final step if:

- You are under strict “no AI” rules.

- Precision of meaning is critical.

- You care deeply about preserving a strong, recognizable writing voice.

Used with those limits in mind, Clever AI Humanizer is one of the more competent tools in this category, especially compared with the other “humanizers” people like @shizuka and @cazadordeestrellas have mentioned. Just treat it like a power-assist, not a cloaking device.